Can You Be Fired Because an Algorithm Didn’t Like Your Face?

When Technology Decides Your Job Future

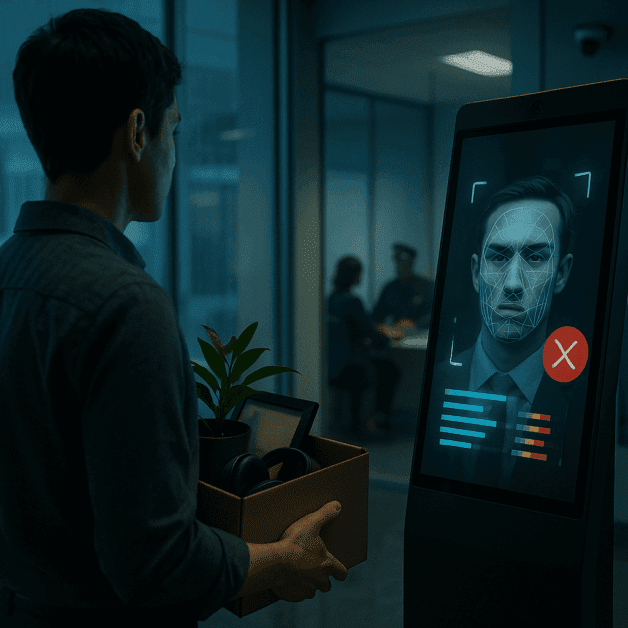

Imagine applying for a job, sitting down for a video interview, and never speaking to a single human being. Instead, a computer program watches your face, analyzes your expressions, and decides whether you are worth hiring. Sounds like science fiction? It is already happening in workplaces across the country and around the world.

Facial recognition and AI-powered hiring tools are becoming more common in the modern workplace. Companies use them to screen job candidates, monitor employee performance, and even make decisions about who gets promoted or let go. But here is the uncomfortable question that more people are starting to ask: Can you actually lose your job — or never get one — because an algorithm did not like your face?

The short answer is yes. And the consequences for workers are serious.

What Is Algorithmic Hiring and How Does It Work?

Algorithmic hiring refers to the use of computer programs and artificial intelligence to help make employment decisions. These systems can scan resumes, rank applicants, and even analyze video interviews by looking at a person’s facial expressions, tone of voice, and word choices.

Some of the most well-known tools in this space include HireVue, Pymetrics, and similar platforms used by large corporations. These programs claim to measure things like a candidate’s enthusiasm, trustworthiness, and potential for success — all from a short video clip or an online game.

Here is how a typical AI-powered interview might work:

- A job applicant records a video answering pre-set questions from home.

- The AI software scans their facial movements, micro-expressions, and body language.

- The system scores the candidate based on how closely they match the company’s “ideal employee” profile.

- A human hiring manager may never even see the video if the algorithm scores the applicant too low.
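The steps above can be sketched in a few lines of code. This is a minimal illustration only, not how HireVue, Pymetrics, or any real vendor works: the feature names, the "ideal" profile values, the scoring formula, and the cutoff are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Applicant:
    name: str
    # Hypothetical numeric features extracted from the video, each in [0, 1].
    features: Dict[str, float]


# Hypothetical "ideal employee" profile and cutoff (assumptions for illustration).
IDEAL_PROFILE = {"smile_rate": 0.8, "eye_contact": 0.9, "speech_pace": 0.6}
CUTOFF = 0.75


def match_score(applicant: Applicant, profile: Dict[str, float] = IDEAL_PROFILE) -> float:
    """Return 1 minus the mean absolute gap between the applicant and the profile."""
    gaps = [abs(applicant.features[key] - target) for key, target in profile.items()]
    return 1.0 - sum(gaps) / len(gaps)


def screen(applicants: List[Applicant], cutoff: float = CUTOFF) -> List[Applicant]:
    """Keep only applicants at or above the cutoff; the rest never reach a human."""
    return [a for a in applicants if match_score(a) >= cutoff]


if __name__ == "__main__":
    pool = [
        Applicant("A", {"smile_rate": 0.8, "eye_contact": 0.9, "speech_pace": 0.6}),
        Applicant("B", {"smile_rate": 0.2, "eye_contact": 0.3, "speech_pace": 0.6}),
    ]
    for person in screen(pool):
        print(person.name, round(match_score(person), 2))
```

Note that nothing in the sketch measures job skills. An applicant who smiles less or makes less eye contact, for whatever reason, falls below the cutoff and is filtered out before any person reviews the recording.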

The problem is that most job seekers have no idea this is happening. And even fewer understand how these systems decide who passes and who fails.

The Very Real Problem of Algorithmic Bias

Algorithmic bias is at the heart of why these tools are so dangerous. Bias in AI is not a random glitch. It arises because these systems are trained on historical data, and history is full of discrimination.

If a company has historically hired mostly white, male candidates in certain roles, the AI learns that pattern. It then scores future applicants based on how closely they resemble that past group. This means that people of color, women, older workers, and people with disabilities can be automatically ranked lower — not because of their skills or experience, but because the algorithm was built on biased data.
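A toy example shows how this happens. The sketch below is not a real hiring model: the group labels and the hiring records are made up, and the "model" is deliberately simplistic. It only memorizes how often each group was hired in the past and reuses that rate as the score for new applicants.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Made-up historical records: (group label, was_hired). Illustrative only.
HISTORY: List[Tuple[str, bool]] = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 1 + [("group_b", False)] * 9
)


def fit_hire_rates(history: List[Tuple[str, bool]]) -> Dict[str, float]:
    """'Train' by computing the past hire rate for each group."""
    hired: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, was_hired in history:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}


def score(group: str, rates: Dict[str, float]) -> float:
    """Score a new applicant purely by their group's past hire rate."""
    return rates.get(group, 0.0)


if __name__ == "__main__":
    rates = fit_hire_rates(HISTORY)
    # Two equally qualified applicants receive different scores.
    print(score("group_a", rates), score("group_b", rates))
```

Real systems rarely use a protected attribute this directly. They are more likely to pick up proxies for it, such as wording on a resume, but the effect is the same: past hiring patterns become the standard for future applicants.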

A major example came to light in 2018, when Reuters reported that Amazon had scrapped its own AI recruiting tool after discovering it was systematically downgrading resumes that included the word “women’s” (as in “women’s chess club”) and favoring language more commonly found on men’s resumes. The system had been trained on resumes submitted over a ten-year period, most of which came from men.

Facial recognition technology adds another layer of concern. Research led by MIT Media Lab researcher Joy Buolamwini found that commercial facial analysis systems misclassified the gender of darker-skinned women at error rates of up to 34.7%, compared with at most 0.8% for lighter-skinned men. When systems with gaps like these are used to evaluate job candidates, the errors do not just cause inconvenience; they can cost people real opportunities.

Employment Discrimination in the Age of AI

Employment discrimination laws in the United States were written long before artificial intelligence existed. The Civil Rights Act of 1964, the Americans with Disabilities Act, and the Age Discrimination in Employment Act were designed to protect workers from human bias. But they were never specifically written with algorithms in mind.

This creates a legal gray area that many workers and their advocates find alarming. If a person is passed over for a job because an AI gave them a low score, who is responsible? The company? The software developer? The algorithm itself?

Currently, employers are still legally responsible for the tools they use to make hiring decisions. Under a legal concept called “disparate impact,” an employer can be held liable for discrimination even if it did not intend to discriminate, as long as its practices have a discriminatory effect on a protected group. This means AI hiring tools are not automatically off the hook just because a human did not directly make the call.
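One widely used rule of thumb for spotting a possible disparate impact is the “four-fifths rule” from the federal Uniform Guidelines on Employee Selection Procedures: if one group’s selection rate is less than 80% of the rate for the most-selected group, that is generally treated as a warning sign. It is a screening heuristic, not a legal verdict. The sketch below shows the arithmetic; the group names and applicant counts are made up, and the function names are illustrative.

```python
from typing import Dict, List, Tuple

# Made-up outcomes of an AI screening step: group -> (selected, applicants).
OUTCOMES: Dict[str, Tuple[int, int]] = {
    "group_a": (48, 80),
    "group_b": (12, 40),
}


def selection_rates(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Selection rate per group: selected divided by applicants."""
    rates = {}
    for group, (selected, applicants) in outcomes.items():
        if applicants <= 0:
            raise ValueError(f"{group} has no applicants")
        rates[group] = selected / applicants
    return rates


def impact_ratios(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Each group's selection rate relative to the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no one was selected, so ratios are undefined")
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]


if __name__ == "__main__":
    print(impact_ratios(OUTCOMES))
    print(flagged_groups(OUTCOMES))
```

In this made-up data, group_a is selected at a rate of 60% and group_b at 30%, so group_b’s impact ratio is 0.5, well below the 0.8 threshold. A result like that would not prove discrimination on its own, but it is the kind of pattern that would call for a closer look at the tool.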

However, proving that an algorithm discriminated against you is incredibly difficult. Most companies keep their AI systems proprietary, meaning their inner workings are hidden from the public. Workers rarely know they were evaluated by an AI, let alone how it scored them or why.

Can You Be Fired by an Algorithm?

Algorithmic decision-making does not stop at hiring. Once you are employed, AI systems may continue to monitor and evaluate you — sometimes with serious consequences.

Warehouse and gig workers are particularly vulnerable. Amazon warehouse workers, for example, have reported receiving automated warnings and even termination notices generated entirely by software that tracks their productivity rates. Delivery drivers for Amazon Flex have been fired by algorithms that flagged them for supposed rule violations, with little to no human review of the decision.

Uber and Lyft drivers have also experienced what some call “robo-firing” — being deactivated from the platform based on automated systems that analyze ride completion rates and passenger ratings, without meaningful human oversight or a clear appeals process.

Office workers are not immune either. Many companies use software to track employee keystrokes, monitor screen activity, and log how much time workers spend on different tasks. These data points can feed into performance evaluations, decisions about promotions, and ultimately, decisions about who gets laid off.

Who Is Most at Risk?

While algorithmic bias can affect anyone, certain groups face a higher risk of harm:

- People of color: Facial recognition systems consistently perform worse on people with darker skin tones, leading to misidentification and unfair evaluations.

- Women: AI systems trained on male-dominated workforces often replicate gender bias in hiring and promotion decisions.

- Older workers: Algorithms may screen out applicants over a certain age based on patterns in data, even when experience should be a valuable asset.

- People with disabilities: AI systems that analyze facial expressions, voice, and body language may flag people with certain disabilities as poor candidates simply because they communicate differently.

- Workers with accents: Voice analysis tools can penalize non-native speakers or people with regional accents, even when their language skills are perfectly adequate for the job.

What Are Your Workplace Rights?

Understanding your rights in the face of algorithmic employment decisions is not easy, but it is important. Here is what workers should know:

You May Have the Right to Know

Some states and cities are beginning to pass laws that require companies to disclose when AI is being used in hiring. Illinois was the first state to require employers using AI video analysis tools to inform candidates and get their consent. New York City now requires employers to audit AI hiring tools for bias and notify candidates when such tools are used.

You Can Challenge Discriminatory Outcomes

If you believe you were passed over for a job or fired due to discrimination, whether by a human or an algorithm, you have the right to file a complaint with the Equal Employment Opportunity Commission (EEOC). The EEOC has stated that AI tools used in hiring are subject to existing anti-discrimination laws, and it has made the use of AI in employment decisions a stated area of focus.

You Can Request Explanations

In some situations, especially in states with stronger privacy laws, workers may be able to request information about how decisions affecting their employment were made. The California Consumer Privacy Act (CCPA), for example, gives residents certain rights over their personal data, which could include data collected during an AI-driven hiring process.

You Can Opt Out — Sometimes

In states where consent is required before AI video analysis, you technically have the right to refuse. However, exercising that right may mean being excluded from the hiring process altogether, which creates its own unfair catch-22 for workers.

What Is Being Done About It?

Awareness of algorithmic bias is growing, and so are efforts to hold companies accountable.

In 2023, the EEOC released guidance specifically addressing the use of AI in employment, warning companies that they cannot escape discrimination liability by outsourcing decisions to technology. The Federal Trade Commission (FTC) has also weighed in, flagging concerns about AI tools that make consequential decisions about people’s lives without transparency or fairness.

Lawmakers at both the state and federal levels are pushing for stronger regulation of AI hiring tools. Proposed legislation would require companies to conduct bias audits, disclose AI use to candidates, and provide workers with meaningful ways to appeal automated decisions.

Civil rights organizations and advocacy groups are also filing lawsuits and pushing for greater accountability. The fight is ongoing, but momentum is building.

What Can Workers Do Right Now?

Until stronger protections are in place, workers can take some practical steps to protect themselves:

- Ask questions: When applying for a job, ask whether AI tools are used in the hiring process. Some companies will tell you.

- Read the fine print: Before completing a video interview or online assessment, read the consent forms carefully to understand what data is being collected and how it will be used.

- Document everything: Keep records of your job applications, interview invitations, and any communication you receive. This documentation can be important if you need to file a complaint later.

- Know your rights by location: Workers in Illinois, New York City, and other jurisdictions with AI-specific laws have additional protections. Stay informed about the laws in your area.

- Reach out for help: If you believe you have been discriminated against, contact the EEOC, a workers’ rights organization, or an employment attorney to explore your options.

The Bottom Line

Technology is changing the workplace faster than the law can adapt. Facial recognition tools and AI-driven hiring systems are already making decisions that affect millions of workers’ livelihoods, and many of those decisions are being made in ways that are unfair, opaque, and sometimes downright discriminatory.

The fact that a computer program is making the call does not make it any less of a human rights issue. Employers still have a responsibility to ensure their hiring and management practices are fair. And workers deserve to know when an algorithm is judging them — and to have a real way to fight back when it gets it wrong.

As AI continues to spread through the workplace, the conversation about employment discrimination, algorithmic bias, and workplace rights is one that every worker, employer, and lawmaker needs to be part of. The stakes are too high to ignore.