Can the Government Delete Your Face From an AI Model? The Surprising Answer

What Happens When Your Face Ends Up in an AI Database?

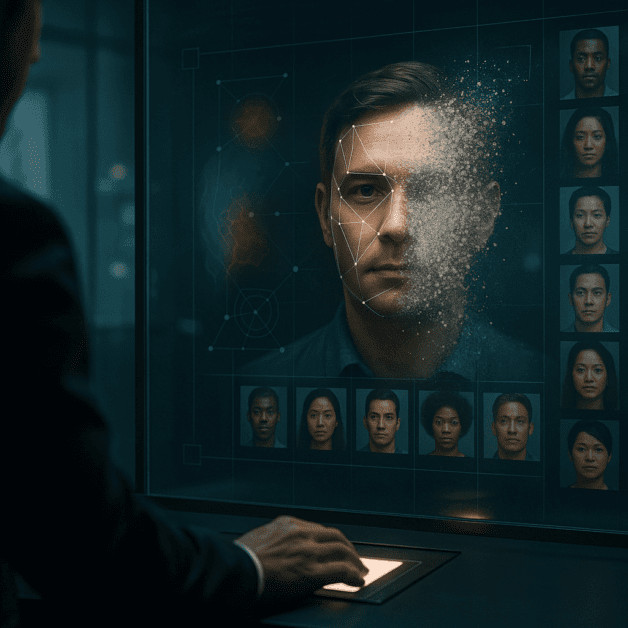

Imagine finding out that your photo has been used to train a facial recognition system without your permission. You never signed a consent form. You never agreed to anything. Yet somewhere, deep inside an AI model, your face exists as a data point helping machines learn to identify people.

This is not a hypothetical situation. It is happening right now, to millions of people around the world. And the big question on everyone’s mind is simple: can the government actually do something about it? Can they force companies to delete your face from these AI systems?

The surprising answer is: it depends. And the full picture is more complicated than most people realize.

How Your Face Gets Into AI Training Data in the First Place

Before diving into what governments can or cannot do, it helps to understand how facial recognition models are built. These systems do not just appear out of thin air. They are trained on enormous collections of images, often gathered from the internet.

Here are some of the most common ways your face could end up in an AI training dataset:

- Social media scraping: Companies collect publicly available photos from platforms like Facebook, Instagram, and Twitter.

- Photo sharing websites: Sites like Flickr have been used as sources for large image datasets.

- Security camera footage: In some cases, footage from public surveillance systems has been used.

- Purchased data: Some companies buy image libraries from third-party data brokers.

- News and media archives: Publicly available news photographs have been included in training sets.

Once your image is inside a trained AI model, it does not sit there like a photo in a folder. Instead, it becomes part of the mathematical structure of the model itself. This is a key point that makes government action incredibly difficult.

What Government Authority Actually Looks Like

Governments do have tools they can use when it comes to facial recognition and AI training data. However, those tools vary enormously depending on where you live.

In the United States

The United States does not have a single federal privacy law that specifically addresses AI training data. Instead, regulation is fragmented across different states and different sectors. Illinois stands out with its Biometric Information Privacy Act, known as BIPA. This law requires companies to get written consent before collecting biometric data, including facial geometry. It also lets individuals sue companies directly, with statutory damages available for each violation.

Several cities, including San Francisco and Boston, have gone a step further by banning government use of facial recognition technology altogether. But these bans apply to how governments use the technology, not necessarily to private companies building AI models.

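The Federal Trade Commission has taken action against some companies for deceptive data practices. In a few settlements, such as its 2021 order against the photo app Everalbum, it has even required a company to delete models built on improperly obtained photos. But those orders target entire models, and there is still no clear legal pathway that forces an AI company to remove your specific face from a trained model.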

In the European Union

The European Union has much stronger privacy protections. The General Data Protection Regulation, commonly known as GDPR, gives individuals in the EU the right to request that their personal data be erased. This right, set out in Article 17, is often called the “right to be forgotten.”

Under GDPR, a facial image counts as biometric data when it is processed with technology that can uniquely identify a person, which is exactly what facial recognition does. Biometric data used this way is treated as a special category requiring extra protection. Companies operating in the EU must have a legal basis for processing such data, and individuals can challenge that processing.

However, even the GDPR runs into a serious technical problem when it comes to AI models, which we will discuss shortly.

In Other Parts of the World

Countries like China have their own AI governance frameworks, though privacy rights work very differently there. Brazil, Canada, and several other nations are working on updated privacy laws that try to address AI specifically. The global picture is a patchwork of rules with no consistent international standard.

The Technical Problem Nobody Talks About Enough

Here is where things get genuinely surprising. Even if a government orders a company to delete your face from an AI model, doing so is technically very difficult. In some cases, it may be nearly impossible without rebuilding the model from scratch.

When a machine learning model is trained, it does not store individual photos like a photo album. Instead, it adjusts billions of mathematical values, called parameters, based on patterns it finds across millions of images. Your face contributes to those patterns, but it is not stored as a separate file that can simply be deleted.
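To make that concrete, here is a minimal Python sketch, not any real facial recognition system. It trains a toy linear model on random vectors standing in for images; `train`, `make_dataset`, and every number in it are illustrative assumptions. The point it shows is that the trained model is just a fixed-size list of weights, the same size whether it saw 10 examples or 1,000, with no per-photo record inside.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is repeatable


def make_dataset(n, dim=4):
    """Hypothetical stand-in for images: n random feature vectors with 0/1 labels."""
    samples = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(n)]
    labels = [1.0 if s[0] + s[1] > 0 else 0.0 for s in samples]
    return samples, labels


def train(samples, labels, epochs=50, lr=0.1):
    """Fit a tiny linear model by gradient descent and return its weights."""
    weights = [0.0] * len(samples[0])
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = sum(w * xi for w, xi in zip(weights, x)) - y
            # Every example nudges every weight a little; nothing is filed away per example.
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
    return weights


small_model = train(*make_dataset(10))
large_model = train(*make_dataset(1000))

# Both models are the same size: four numbers, regardless of how many examples shaped them.
print(len(small_model), len(large_model))
```

There is no slot in `large_model` that corresponds to example number 417. Its influence is spread across all four weights, mixed with the influence of every other example.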

Researchers are actively working on a concept called machine unlearning. This is the process of removing the influence of specific training data from an already-trained model. It sounds straightforward, but in practice it is enormously complex. The most reliable approach is to retrain the entire model without the offending data, which for large models can cost millions of dollars and take weeks of computing time.
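As a rough illustration of the retraining approach, the sketch below fits a one-variable toy model twice: once on all the data, and once with a single record left out. The data points, the `train` function, and its settings are assumptions made up for this example. Retraining without the record is the only step here that fully removes its influence, and it costs a complete training run.

```python
def train(data, epochs=200, lr=0.05):
    """Fit y = w*x + b by full-batch gradient descent from a fixed starting point."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = sum((w * x + b - y) * x for x, y in data) / n
        grad_b = sum((w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


# Hypothetical training set; the last record is the one its owner wants removed.
data = [(0.0, 0.1), (1.0, 0.9), (2.0, 2.1), (3.0, 2.9), (4.0, 9.0)]
record_to_forget = data[-1]

original_model = train(data)

# Unlearning by retraining: drop the record and repeat the entire training run.
retained = [point for point in data if point != record_to_forget]
retrained_model = train(retained)

print("original :", original_model)
print("retrained:", retrained_model)
```

The two sets of weights differ because the removed record was pulling the fitted line toward itself. With five data points the second run is instant. With billions of images, repeating the run for every deletion request is what makes this approach so expensive.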

Other approaches try to approximate unlearning by fine-tuning the model, but critics argue these methods do not truly erase the data’s influence. The model may still retain some memory of what it learned from your face, even if imperfectly.
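The critics' concern can be seen in a toy setting. The following sketch is an assumption-laden illustration, not a published unlearning method: it trains a one-variable model, then "unlearns" one record by running only a few extra training steps on the remaining data, and compares the result with a model retrained from scratch without that record. The data, `gradient_descent`, and the step counts are all invented for the example.

```python
def gradient_descent(data, w, b, steps, lr=0.05):
    """Run full-batch gradient descent on y = w*x + b, starting from (w, b)."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum((w * x + b - y) * x for x, y in data) / n
        grad_b = sum((w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


# Hypothetical training set; the last record is the one to be forgotten.
data = [(0.0, 0.1), (1.0, 0.9), (2.0, 2.1), (3.0, 2.9), (4.0, 9.0)]
retained = data[:-1]

original = gradient_descent(data, 0.0, 0.0, steps=200)
retrained = gradient_descent(retained, 0.0, 0.0, steps=200)  # full retrain, the reference point

# Approximate unlearning: start from the original model, take a few cheap steps on retained data.
finetuned = gradient_descent(retained, original[0], original[1], steps=5)

gap_before = abs(original[0] - retrained[0])
gap_after = abs(finetuned[0] - retrained[0])
print("slope gap before fine-tuning:", gap_before)
print("slope gap after fine-tuning: ", gap_after)
```

Fine-tuning moves the model toward the retrained one, but after a handful of steps a gap remains. That leftover gap is the residual influence of the forgotten record, and in a real model with billions of parameters it is far harder to measure than it is here.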

This creates a strange legal situation. A company might technically comply with a government deletion order while the practical effect of that deletion is minimal or unverifiable.

Real Cases Where Governments Have Tried to Act

There have been real-world examples of government action that shed light on how this plays out in practice.

Clearview AI

Clearview AI is one of the most well-known examples. The company built a facial recognition database by scraping billions of photos from the internet. It then sold access to law enforcement agencies.

Multiple governments pushed back hard. In Australia, the Privacy Commissioner ordered Clearview to destroy the images it had collected from people in Australia and to stop collecting more. In the UK, the Information Commissioner’s Office issued a fine of over seven million pounds. In Canada, privacy regulators found that the company’s practices violated Canadian privacy law.

Clearview largely ignored or contested these rulings, arguing that it was not subject to those jurisdictions because it was a US-based company. The cases dragged on through legal systems for years. This shows that even when governments try to act, enforcement across borders is a major obstacle.

IBM’s Diversity in Faces Dataset

IBM created a dataset using photos from Flickr to help reduce bias in facial recognition systems. Many photographers and subjects were never informed their images were being used. After significant public backlash and media attention, IBM eventually discontinued the dataset. But this happened largely because of public pressure, not a direct government order.

Your Privacy Rights Versus AI Reality

The gap between what privacy laws promise and what technology actually allows is wide. Here is a straightforward breakdown of where rights exist and where they run into walls:

- Right to know: In many jurisdictions, you have the legal right to ask a company whether they hold your data. In practice, companies may not even know which specific images were used to train their models.

- Right to deletion: Laws like GDPR grant this right, but technical limitations make true deletion from AI models extremely difficult to carry out and even harder to verify.

- Right to consent: Some laws require consent before collecting biometric data. However, if your photo was already publicly available online, companies often argue that consent was implied.

- Right to accuracy: You may have the right to correct inaccurate data, but correcting what a model has learned about your face is not the same as correcting a record in a database.

What Is Being Done to Fix This Gap?

Governments, researchers, and advocacy groups are not sitting still. Several efforts are underway to make AI systems more accountable when it comes to training data and privacy rights.

Stronger AI-Specific Laws

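The European Union passed the EU AI Act, which places facial recognition under strict regulation. It bans building facial recognition databases through untargeted scraping of images from the internet or CCTV footage, and it sharply restricts real-time biometric identification in public spaces by law enforcement. Other remote biometric identification systems are classified as high-risk and must meet transparency and data governance requirements. This represents the most comprehensive attempt yet to regulate AI from a government perspective.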

Advances in Machine Unlearning

Tech companies and universities are investing in research to make machine unlearning more practical and verifiable. If this research succeeds, it could make government deletion orders actually enforceable in a meaningful way. Google, for example, has published research into unlearning techniques that could be applied to large models.

Data Minimization Requirements

Some new regulations are pushing for data minimization, meaning companies should only collect the data they truly need. If companies collect less facial data in the first place, the problem of removing it later becomes smaller.

Consent-Based Data Collection Platforms

Some organizations are building ethical AI training datasets where participants explicitly agree to have their images used. This does not help people whose data was already collected, but it offers a cleaner model going forward.

What You Can Do Right Now

While governments and researchers work through these challenges, there are practical steps you can take to protect yourself:

- Check your privacy settings: Review the privacy settings on social media platforms to limit who can see and download your photos.

- Submit data requests: If you live in a jurisdiction with strong privacy laws, you have the right to request that companies disclose and delete your data. Use it.

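- Use tools that fight back: Researchers have developed tools like Fawkes, which subtly alters your photos before you post them so that facial recognition models trained on them have trouble recognizing you, while the changes are nearly invisible to humans. A related tool from the same research group, Glaze, does something similar for artists, protecting their style from being copied by AI image generators rather than protecting faces.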

- Stay informed: Privacy law is changing rapidly. Knowing your rights in your specific location can make a real difference.

- Support advocacy organizations: Groups focused on digital rights are pushing for stronger laws and better enforcement. Their work benefits everyone.

The Bottom Line

Can the government delete your face from an AI model? In theory, yes. Governments have the legal authority to order companies to remove personal data, including facial images, from their systems. Some have already done so with mixed results.

In practice, however, the technical reality makes true deletion from AI training data one of the hardest problems in modern privacy law. The models do not store faces the way you might store a photo on your phone. The influence of your data is woven into a complex mathematical structure that cannot simply be unstitched.

This is not a reason to give up on privacy rights. It is a reason to push harder for better laws, better technology, and better accountability from companies that use facial recognition. The conversation between government authority and AI capability is only just beginning, and the outcome will shape how much control you have over your own identity for decades to come.