The AI Therapy Ban – What 12 States Just Made Illegal

A New Wave of Mental Health Laws Is Sweeping the Country

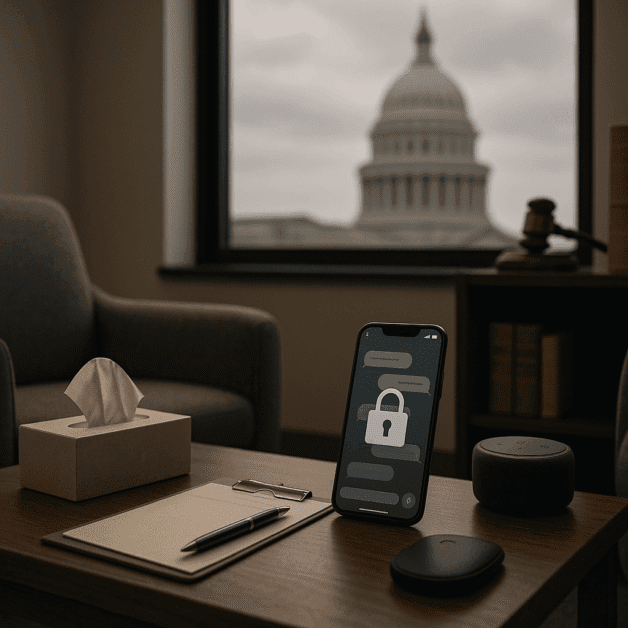

Something significant is happening in state legislatures across America. Over the past year, 12 states have passed or introduced laws that directly target AI-powered therapy tools, placing strict limits on how artificial intelligence can be used in mental health care. For millions of people who rely on apps and chatbots for emotional support, these changes could have a real impact on how they get help.

But what exactly did these states make illegal? And why now? Here is a clear breakdown of what is happening, why lawmakers took action, and what it means for everyday people.

What These States Are Actually Banning

First, it is important to understand that most of these laws are not banning AI entirely from the mental health space. Instead, they are drawing a firm line between two very different things:

- AI as a support tool: helping licensed therapists with scheduling, note-taking, or basic wellness check-ins

- AI acting as a therapist: conducting full therapy sessions, diagnosing conditions, or providing clinical treatment without a licensed human involved

The second category is what these states are cracking down on. Specifically, the laws target apps and platforms that market themselves as therapy providers while using AI chatbots to replace human professionals entirely. In several of these states, offering that kind of service without proper professional licensing is now a violation of mental health law.

Which States Are Leading the Charge

The 12 states that have moved to regulate or restrict AI therapy practices include both politically red and blue states, a sign that this concern crosses party lines. While the specific details of each law vary, the states taking action include:

- California

- Texas

- Florida

- New York

- Illinois

- Ohio

- Pennsylvania

- Michigan

- Colorado

- Washington

- Arizona

- Georgia

Each state has taken its own approach, but there is a clear shared concern running through all of them — that people in genuine mental health crisis should not be left alone with a machine that has no real clinical training, accountability, or human judgment.

The Professional Licensing Problem

A major reason these laws exist comes down to professional licensing. In every state, becoming a licensed therapist, psychologist, or counselor requires years of education, supervised practice, background checks, and ongoing training. These rules exist to protect people seeking help during some of the most vulnerable moments of their lives.

AI therapy apps operate in a gray area that existing professional licensing rules never anticipated. A chatbot does not hold a license. It cannot be disciplined by a licensing board. It cannot be sued for malpractice in the same way a human therapist can. And it cannot truly read the room when someone is in serious danger.

State lawmakers argue that allowing unlicensed AI systems to provide what amounts to clinical therapy is no different from letting someone practice medicine without a license. The new laws are designed to close that loophole by requiring that any service offering therapeutic treatment be backed by a licensed human professional.

What Triggered This Response

This wave of state regulation did not come out of nowhere. Several high-profile incidents helped push lawmakers into action.

In 2023 and 2024, reports emerged of users in the midst of a mental health crisis receiving harmful or inadequate responses from AI therapy apps. One widely publicized case involved a teenager who was reportedly encouraged by an AI chatbot in ways that worsened their situation rather than helping. Critics of the AI therapy industry pointed to these cases as proof that the technology was not ready to handle the complexity of real human suffering.

Mental health professionals had also been raising alarms for years. Organizations representing licensed therapists and counselors pushed hard for state-level regulation, arguing that commercial AI platforms were essentially practicing therapy without a license and putting vulnerable users at risk.

The Apps in the Crosshairs

Several well-known apps have found themselves in the middle of this debate. Platforms like Replika, Woebot, and others that offer emotionally supportive conversations or claim to help users manage anxiety and depression have faced growing scrutiny. Some of these apps are now facing legal questions about whether their services cross the line into what these new state laws define as therapy.

It is worth noting that not all of these apps claim to be therapy. Many market themselves as wellness tools or emotional support companions. But critics argue that when users are turning to them during mental health crises and the apps respond with clinical-style guidance, the label on the product does not really matter. What matters is what the product is actually doing.

Where AI Can Still Play a Role

Despite the restrictions, these laws are not slamming the door entirely on AI in mental health care. In fact, many mental health professionals and lawmakers see a legitimate and valuable role for AI when it is used responsibly. Here is where AI is still welcome:

- Administrative support: Helping therapists manage appointments, billing, and records

- Screening tools: Helping identify people who may need professional help and connecting them to licensed care

- Supplement to therapy: Providing between-session support under the oversight of a licensed professional

- Crisis hotline assistance: Helping route people to the right human resources quickly

- Research and training: Helping train new therapists or analyze treatment outcomes

The message from these states is not that AI is the enemy of mental health care. It is that AI should support human professionals, not replace them.

What This Means for People Who Use AI Therapy Apps

If you live in one of these 12 states and you currently use an AI-based mental health app, you may be wondering what this means for you. Here is the honest answer: it depends on the app and on exactly how your state's law is written.

Some apps may change their services to comply with the new rules. Others may pull back from certain states altogether. A few may add licensed human professionals to their platforms to stay within the law. Whatever happens, users should watch for updates from the apps they use and understand which protections are and are not in place when they seek support through digital platforms.

If you are dealing with serious mental health challenges, these laws are actually designed with your safety in mind. They are pushing toward a system where you have access to real human expertise when you need it most, rather than a well-meaning but ultimately limited algorithm.

The Bigger Picture on AI and Mental Health Law

What is happening in these 12 states is likely just the beginning. Mental health law has never had to grapple with artificial intelligence before, and the legal frameworks being built right now will shape how this technology is used for years to come.

Federal regulators are also watching closely. The FDA has already weighed in on certain digital health tools, and there are growing calls for a national standard that would apply consistent rules across all 50 states. Without that kind of unified approach, companies face a patchwork of differing state rules, and critics worry that some could base their operations in states with looser rules while still serving users in states with stricter ones.

For now, state regulation is filling the gap. And while the details are complicated, the core question these laws are trying to answer is actually very simple: when someone reaches out for help with their mental health, do they deserve a real human being on the other end?

These 12 states have answered that question with a clear yes.

The Bottom Line

The movement to regulate AI therapy reflects a growing recognition that mental health care is too serious to leave entirely in the hands of technology. Professional licensing requirements exist for good reasons, and those reasons do not disappear just because the service is delivered through a smartphone screen.

As AI continues to develop and as more people turn to digital tools for mental health support, the legal landscape will keep evolving. Staying informed about the rules in your state is more important than ever — both for users seeking help and for companies building the tools that aim to provide it.