The Autonomous Vehicle Crash — Who’s Actually Liable Under 2026 Rules

When a Self-Driving Car Crashes, Who Pays?

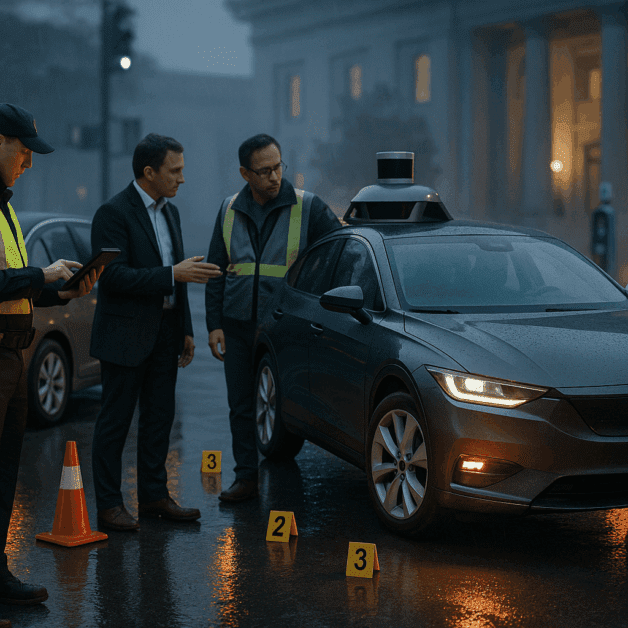

Autonomous vehicles promised to make roads safer through fewer human errors, faster reaction times, and smarter decision-making. But what happens when one of these self-driving cars crashes? Who is responsible? And under the rules taking shape in 2026, how does the law handle these situations?

These are not just hypothetical questions anymore. Self-driving vehicles are operating on public roads right now, and accidents have already happened. As the technology expands, the legal system has had to catch up — sometimes awkwardly, sometimes surprisingly quickly.

This article breaks down how liability works in autonomous vehicle crashes under the evolving legal framework of 2026, using plain language that anyone can follow.

What Makes Autonomous Vehicle Crashes Different From Regular Car Accidents

In a traditional car accident, figuring out fault is relatively straightforward. A driver ran a red light, someone was speeding, or a person was distracted. Human behavior sits at the center of almost every crash case.

With autonomous vehicles, that simple picture gets complicated fast. There may be no human driver actively controlling the car at the moment of impact. Instead, you have software making decisions, sensors interpreting the environment, hardware executing commands, and a manufacturer designing the entire system.

This shift raises questions that old traffic laws were never built to answer:

- Can software be negligent?

- Does the person sitting in the driver’s seat bear any responsibility if they weren’t actually driving?

- What if the system worked exactly as designed, but that design had a flaw?

- What if a software update pushed the night before the crash introduced a bug?

These are the questions courts, legislators, and regulators have been wrestling with — and the answers in 2026 look quite different from just a few years ago.

Understanding Levels of Automation and Why They Matter

Not all autonomous vehicles are created equal. SAE International (formerly the Society of Automotive Engineers) publishes a classification system, SAE J3016, that ranges from Level 0 to Level 5, and where a vehicle falls on that scale has a huge impact on how liability is assigned.

- Levels 0–2: The human driver is still in control. Driver-assistance features like adaptive cruise control or lane centering may exist, but the driver must remain engaged. In crashes involving these vehicles, traditional driver negligence rules still apply in most cases.

- Level 3: The vehicle can handle the driving task on its own under limited conditions, but the human must be ready to take over when prompted. This is a legal gray zone. If the car signals the driver to take control and they don’t respond in time, blame can shift back to the human occupant.

- Level 4: The vehicle operates autonomously within specific conditions without requiring human intervention. When it crashes in those conditions, the human occupant is largely removed from the liability picture.

- Level 5: Full autonomy in all conditions. No steering wheel, no pedals in many designs. Human liability for the driving itself is essentially eliminated.

Most of the interesting and contested legal cases in 2026 are centered on Level 3 and Level 4 vehicles, where the handoff between human and machine is still murky.
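For readers who think in flowcharts, the rules of thumb above can be sketched as a small Python function. This is a simplified illustration of how this article describes the levels, not a statement of any state's law. The function name, its parameters, and the returned labels are all hypothetical, and real cases turn on facts that a lookup like this cannot capture.

```python
def liability_focus(level, takeover_ignored=False, within_design_conditions=True):
    """Return who this article says liability tends to center on.

    Hypothetical, simplified sketch: the labels are illustrative only.
    """
    if level not in range(6):
        raise ValueError("SAE levels run from 0 to 5")
    if level <= 2:
        # The driver must stay engaged, so traditional negligence rules apply.
        return "human driver"
    if level == 3:
        # Gray zone: blame can shift back if a takeover request was ignored.
        if takeover_ignored:
            return "human occupant (at least partly)"
        return "manufacturer or software developer"
    if level == 4 and not within_design_conditions:
        # The article gives no rule of thumb for this situation.
        return "depends on the facts"
    # Level 4 inside its design conditions, or Level 5.
    return "manufacturer, software developer, or fleet operator"


print(liability_focus(3, takeover_ignored=True))
```

The `takeover_ignored` flag only matters at Level 3, which mirrors why that level produces so many contested cases: the same crash can point to a different party depending on whether the human responded to the handoff.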

The Main Parties Who Can Be Held Liable

The Vehicle Manufacturer

When a self-driving car crashes and the technology itself is at fault, product liability law steps in. Manufacturers can be held responsible when their vehicle has a defect — whether that defect is in the hardware, the software, or the design of the system as a whole.

Under product liability theory, an injured person does not necessarily need to prove the manufacturer was careless. They need to show only that the product was defective and that the defect caused the harm. This is called strict liability, and it has become a key legal tool in autonomous vehicle crash cases.

Several major automakers and technology companies have already faced lawsuits following crashes involving their autonomous systems. Settlements in these cases have largely stayed private, but courts have been willing to apply product liability frameworks to self-driving technology in a growing number of jurisdictions.

The Software Developer

In many autonomous vehicles, the car manufacturer and the company that built the self-driving software are different entities. A traditional automaker may license or partner with a technology firm to power the autonomous driving system.

When a software flaw causes a crash — a failure to recognize a pedestrian, an incorrect response to road conditions, a decision-making error in the algorithm — that software developer can be brought into the lawsuit. Separating exactly where the fault lies between hardware and software can be enormously complex, often requiring specialized technical experts to untangle.

The Fleet Operator or Ride-Share Company

Many autonomous vehicles in operation today are not privately owned. They belong to commercial fleets — ride-share services, delivery companies, and transportation networks. These businesses operate and deploy the vehicles, and they carry their own layer of responsibility.

If a company knew about a system malfunction and kept the vehicle running, failed to maintain it properly, or deployed it in conditions it wasn’t rated for, that company can face serious liability. In 2026, several states have passed specific rules requiring commercial operators of autonomous vehicles to carry minimum insurance coverage levels and maintain detailed operational logs.

The Human Occupant or Supervisor

Even in highly automated vehicles, the question of occupant responsibility has not fully disappeared. At Level 3 automation, a human who fails to respond to a takeover request from the vehicle can bear partial liability. In some states, failure to maintain basic attention while operating a Level 3 vehicle is treated similarly to distracted driving.

At higher automation levels, the occupant is far less likely to face liability for the driving itself — but could still be responsible for other factors, such as failing to report a known system defect or deliberately disabling safety features.

Third-Party Service Providers

Autonomous vehicles rely on more than just their onboard systems. They communicate with road infrastructure, use mapping data from outside providers, and receive updates from cloud-based systems. If inaccurate mapping data contributed to a crash, the company that provides that mapping service could potentially be held liable as well.

This web of potential defendants is one reason autonomous vehicle crash cases can become extremely complicated, expensive, and slow to resolve.

How 2026 Regulations Are Reshaping Liability

The regulatory environment around autonomous vehicles has changed substantially in recent years. At the federal level in the United States, new guidance from the National Highway Traffic Safety Administration (NHTSA) has pushed manufacturers to maintain detailed records of autonomous system decisions and crash data. This data must now be preserved and made available in litigation, which gives injured parties much better access to the evidence they need.

Several states have enacted their own autonomous vehicle laws that directly address liability. Key developments in 2026 include:

- Expanded manufacturer responsibility: In states including California, Arizona, and Texas, manufacturers who deploy Level 4 or Level 5 vehicles are presumed to be the primary responsible party in crashes that occur during autonomous operation.

- Mandatory insurance frameworks: Commercial autonomous vehicle operators are now required to carry higher minimum liability coverage, ensuring that injury victims are not left without a source of compensation.

- Data black box requirements: As with flight recorders in aviation, many jurisdictions now require autonomous vehicles to maintain detailed logs of the system’s decisions and sensor data in the moments before and during a crash.

- No-fault compensation mechanisms: Some states are experimenting with no-fault funds specifically for autonomous vehicle crashes, allowing injured parties to receive compensation more quickly without having to prove exactly who was at fault.

These changes are still evolving. Not every state has adopted a comprehensive framework, and the rules can vary significantly depending on where the crash occurred.

What Injured People Actually Need to Know

If you or someone you know is hurt in a crash involving an autonomous or partially autonomous vehicle, the steps you take immediately after the accident matter a great deal.

Document Everything

Just as with any car accident, gather as much evidence as possible at the scene. Photographs, witness contact information, and official police reports are essential starting points. With autonomous vehicles, there is an additional layer: note the make and model, any indication of the vehicle’s automation level, and the operator’s name if the vehicle belongs to a commercial fleet.

Preserve Your Right to Data

Autonomous vehicle manufacturers collect enormous amounts of data about how their systems operate. In an injury case, this data can be the key to proving what the vehicle was doing at the moment of the crash. An attorney experienced in autonomous vehicle law can send preservation letters quickly, preventing data from being deleted or overwritten before it can be used as evidence.

Understand That Multiple Parties May Be Involved

Unlike a typical two-car accident, your case may involve a manufacturer, a software company, a fleet operator, and possibly an individual as well. This is not necessarily a bad thing — it may mean there are more sources of compensation available. But it also means the case is likely to be more complex than a standard personal injury claim.

Work With an Attorney Who Understands Emerging Technology

Autonomous vehicle cases sit at the intersection of personal injury law, product liability, and cutting-edge technology. Not every attorney is equally prepared to handle them. Look for someone with experience in product liability and a willingness to work with technical experts who can analyze vehicle system data.

The Bigger Picture: Liability Law Catching Up With Technology

Autonomous vehicle technology has developed faster than the legal system can comfortably handle. That gap creates real challenges for people who are hurt in crashes involving these vehicles. The good news is that courts and lawmakers have been making genuine progress in closing that gap.

The core principles of personal injury law — that people who are harmed deserve compensation from those responsible — have not changed. What has changed is how we identify who is responsible when the driver is a machine.

In 2026, that responsibility is increasingly falling on the companies that build, program, and deploy autonomous vehicles. That is a significant shift, and it reflects a broader recognition that when technology causes harm, the businesses that profit from that technology should not be able to simply walk away from the consequences.

For anyone affected by an autonomous vehicle crash, understanding this shifting landscape is the first step toward protecting your rights. The law is still evolving, but it is moving in a direction that takes the rights of injured people seriously — and that matters.