The Federal Kids’ Online Safety Act – What Actually Made It Into the Final Bill

What Is KOSA and Why Does It Matter?

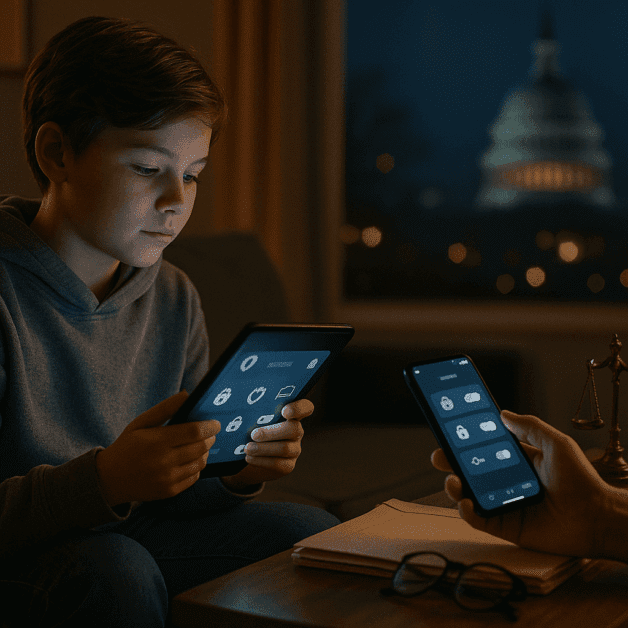

The Kids’ Online Safety Act, commonly known as KOSA, is a federal law designed to protect children and teenagers from harm on the internet. After years of debate, public pressure, and multiple rounds of revision, the bill finally made its way through Congress and into law. If you have kids who use social media, play online games, or spend time on apps, this law affects your family directly.

Teen protection online has been a hot topic for years. Parents, doctors, and child safety advocates have long argued that social media platforms and other websites were not doing enough to keep young users safe. KOSA was written to change that — and the final version of the bill includes some real, enforceable rules that put the responsibility on tech companies rather than kids and parents alone.

The Core Goal of the Law

At its heart, KOSA is about making the internet safer for minors. The law targets platforms that are likely to be used by people under the age of 17. It requires these platforms to take active steps to reduce harm rather than simply waiting for problems to be reported. That shift — from reactive to proactive — is one of the biggest changes the law brings to internet regulation in the United States.

The law recognizes that teenagers are especially vulnerable online. Their brains are still developing, and many of them spend hours each day on apps that are specifically designed to keep them engaged. KOSA pushes back against that by requiring platforms to think about the wellbeing of young users when they design their products.

What Actually Made It Into the Final Bill

Earlier drafts of KOSA faced serious criticism from a wide range of groups, including civil liberties organizations and LGBTQ+ advocates who worried the law could be used to restrict access to helpful content for vulnerable teens. The final version of the bill made important changes to address those concerns. Here is a breakdown of what the law actually includes:

Duty of Care

The final bill requires covered platforms to act in the best interest of minors. This is called a “duty of care.” Platforms must take reasonable steps to prevent or reduce harms such as anxiety, depression, eating disorders, substance abuse, and suicidal behavior. This duty of care is one of the most significant features of the law because it creates a legal obligation for tech companies to consider the mental health of young users.

Default Safety Settings for Minors

One of the most practical changes in the law is the requirement for platforms to turn on the strongest privacy and safety settings by default for users who are minors. In the past, many apps launched with settings that maximized engagement and data collection. Under KOSA, those defaults must now prioritize safety. Teens should not have to dig through menus to protect themselves — the protection should be built in from the start.

Limits on Certain Features

The law also restricts some specific features that have been linked to harmful behaviors in young people. These include:

- Autoplay functions that keep videos running without the user choosing to continue

- Infinite scroll features that make it easy to lose track of time

- Notifications during certain hours, such as late at night

- Personalized recommendations that could push harmful content to minors

Adults can still use these features, but platforms must limit or disable them for users under 17 unless a parent explicitly allows them.

Parental Controls and Transparency

KOSA gives parents more control over how their children use covered platforms. Companies must provide tools that let parents monitor screen time, set content limits, and review what their child is doing on the platform. Importantly, the law also requires that these tools be easy to use and clearly explained. In the past, parental control features often existed but were buried or confusing.

Data Privacy Protections

The final bill strengthened protections around data collection for minors. Platforms cannot collect, use, or share personal data from users under 17 for advertising purposes without following strict rules. This is a significant step in internet regulation because targeted advertising aimed at children has long been a concern for parents and privacy advocates.

The FTC’s Role in Enforcement

The Federal Trade Commission, or FTC, is the agency responsible for enforcing KOSA. The commission has the authority to investigate complaints, issue fines, and take legal action against companies that violate the law. State attorneys general can also bring lawsuits on behalf of their residents, which adds a second layer of accountability to enforcement.

What Was Removed or Changed From Earlier Versions

Earlier drafts of KOSA gave state attorneys general the power to decide what content was harmful to minors. Critics argued this could allow officials to target information about topics like sexual orientation, gender identity, or reproductive health. The final version removed that broad discretion and replaced it with a more specific list of harms tied to documented mental and physical health outcomes.

This was an important change. Rather than leaving “harmful content” up to individual interpretation, the law focuses on behaviors and outcomes — things like eating disorders, self-harm, and substance abuse. The result is a law that is more clearly defined and less likely to be used as a tool to censor legitimate information that teenagers may need.

Who Does KOSA Apply To?

The law applies to online platforms that are “likely to be used” by minors. This includes major social media sites, video streaming services, and gaming platforms. Smaller platforms and websites that are clearly not aimed at young users may be exempt, but the standards for determining who is covered are relatively broad.

Companies with fewer than a certain number of users may also qualify for reduced requirements, though the core safety obligations still apply. The law is not a one-size-fits-all rule, but it casts a wide enough net to cover the platforms where most teenagers spend their time.

How Does KOSA Compare to Other Countries?

The United States has often lagged behind Europe and other parts of the world when it comes to internet regulation for young people. The United Kingdom’s Age Appropriate Design Code, which took effect in 2020, requires platforms to consider child safety by design. Australia has introduced similar rules. KOSA moves the U.S. closer to those international standards, though some experts say there is still more work to be done.

The law does not require age verification in the same way that some other countries have mandated. That remains a controversial and complicated issue, partly because strict age checks can raise privacy concerns of their own. KOSA focuses more on how platforms behave once a user is identified as a minor, rather than on how to confirm age in the first place.

What Comes Next?

Now that KOSA is law, the focus shifts to implementation and enforcement. Platforms will need time to update their systems, build new tools, and train their teams. The FTC will need to develop clear guidelines for what compliance looks like in practice. Parents and advocates will be watching closely to see whether the rules actually change how these companies operate.

There will almost certainly be legal challenges from tech companies or other groups who believe parts of the law go too far. Courts will play a role in shaping exactly how KOSA is applied over the coming years. That is a normal part of how major federal laws work, and it does not mean the law will be gutted — it simply means that the details will continue to be worked out over time.

The Bottom Line for Families

KOSA is a real step forward for teen protection online. It puts legal obligations on the companies that build and run the platforms where young people spend their time. It requires safer default settings, limits on manipulative design features, stronger privacy rules, and better tools for parents. It is not a perfect solution to every problem, and it will not eliminate all risks for young people online — but it changes the rules in meaningful ways.

For parents, KOSA means you will have more tools and more rights when it comes to protecting your kids online. For teenagers, it means the apps and platforms they use will be required by law to consider their wellbeing, not just their engagement. And for the tech industry, it means the era of building products for young users without serious accountability is coming to an end.