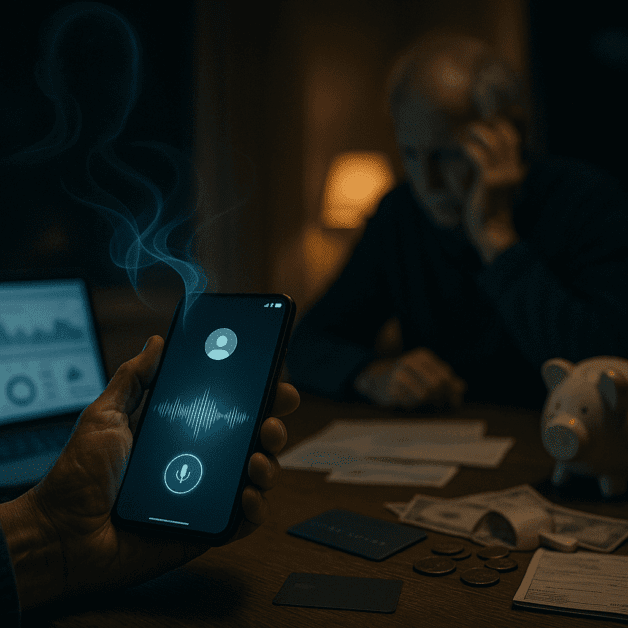

AI Voice Spoofing Fraud – How One 10-Second Clip Stole a Retiree’s Life Savings

When a Voice You Trust Becomes a Weapon Against You

Imagine picking up the phone and hearing your grandson’s voice on the other end. He sounds scared. He says he’s been in an accident, that he’s in trouble, and that he desperately needs money right away. You don’t hesitate. You trust that voice completely. Why wouldn’t you? You’ve known it for decades.

But what if that voice wasn’t really him?

This is exactly what happened to a 76-year-old retired schoolteacher in Ohio. Using less than ten seconds of audio taken from a social media video, criminals cloned her grandson’s voice with artificial intelligence. They called her, ran an emotional script, and walked away with her entire life savings, more than $22,000, before she realized what had happened.

Stories like hers are no longer rare. They are happening every single day across the United States and around the world. Voice fraud powered by AI is quickly becoming one of the most dangerous and fastest-growing forms of elder fraud and cybercrime we have ever seen.

What Is AI Voice Spoofing and How Does It Work?

AI voice spoofing is a type of fraud where criminals use artificial intelligence tools to clone or copy a real person’s voice. Once they have a voice sample — even a very short one — they can use that sample to make the AI speak any words they want, in that same voice, with realistic emotion and tone.

Here is how the process typically works:

- Step 1 – Collecting a voice sample: The scammer finds a short audio or video clip of the target’s family member. This could be a Facebook video, a TikTok clip, a YouTube video, or even a voicemail. Just a few seconds of audio is often enough.

- Step 2 – Running it through an AI tool: The scammer uploads the sample into a voice cloning program. Many of these tools are cheap, easy to use, and widely available online.

- Step 3 – Creating a script: The criminal writes a story designed to trigger panic and urgency. Common scripts involve car accidents, arrests, medical emergencies, or being stranded in another country.

- Step 4 – Making the call: Using the cloned voice, the scammer calls the victim — usually an elderly parent or grandparent — and delivers the fake emergency story.

- Step 5 – Demanding money fast: The caller pushes for immediate payment, usually through wire transfer, gift cards, or cryptocurrency, because these methods are very hard to trace or reverse.

The entire process can be set up and executed within hours. And because the voice sounds so real, many victims never question it in the moment.

Why Older Adults Are Being Targeted

Elder fraud is not a new problem, but AI has made it far worse. Older adults are frequently targeted for several key reasons:

- They are more likely to have significant savings or retirement funds readily available.

- They may be less familiar with AI technology and less likely to suspect voice cloning is even possible.

- They tend to be more trusting of voices they recognize, especially family members.

- They are often more emotionally responsive to stories about loved ones being in danger.

- Isolation — which is common among retirees — can make urgent calls feel even more impactful.

According to the FBI’s Internet Crime Complaint Center, Americans over the age of 60 reported losing more than $3.4 billion to fraud in 2023 alone. Reported losses have been rising, and investigators warn that AI voice fraud is a growing part of the problem.

The Real Story: 10 Seconds That Changed Everything

Let’s go back to that retired schoolteacher in Ohio. Her name has been kept private to protect her dignity, but her story has been shared widely by fraud investigators and consumer protection advocates.

Her grandson had posted a short birthday video on Facebook about six months before the fraud took place. In the video, he laughed, said a few sentences, and thanked people for their birthday wishes. That ten-second clip was all the criminals needed.

When she received the call, she heard what she believed was her grandson’s voice telling her he had been in a car accident, that the other driver was injured, and that he had been detained by police. A second person then got on the line, claiming to be a lawyer, and told her that bail money needed to be paid immediately or her grandson would spend the night in jail.

She was told not to tell anyone in the family because it would embarrass him. She was told to buy gift cards and read the numbers over the phone. She did exactly that — four times — until her account was drained.

When she finally called her grandson to check on him, she discovered he was perfectly fine. He had no idea any of this had happened. The voice she heard had been entirely fake.

“I knew his voice,” she later told investigators. “I’ve known that voice since the day he was born. I would have bet my life it was him.”

In a terrible and heartbreaking way, she did.

How AI Abuse Is Making Cybercrime Easier Than Ever

For most of human history, fraud required some level of skill, patience, or access to special tools. AI has changed that completely. Today, voice cloning software is available online for as little as a few dollars per month. Some versions are even free.

These tools were originally developed for legitimate purposes — audiobook narration, accessibility features for people who have lost their voices, entertainment and gaming. But criminals quickly recognized how powerful they could be for deception.

The AI abuse problem goes beyond just voice cloning. Scammers are also using AI to:

- Generate fake identification documents and photos

- Write highly convincing phishing emails without spelling errors or awkward phrasing

- Create deepfake videos of real people saying things they never said

- Analyze large amounts of personal data stolen from data breaches to personalize scam attacks

What makes voice fraud particularly dangerous is that it attacks something most people have always relied on instinctively — the ability to recognize a familiar voice. That instinct, which has served humans well for thousands of years, is now being used against us.

Warning Signs That a Call Might Be a Scam

Even though these scams are very convincing, there are some common warning signs that something may not be right:

- Urgency and pressure: The caller insists you must act immediately and cannot wait.

- Secrecy: You are told not to tell other family members or friends about the situation.

- Unusual payment methods: Legitimate emergencies are almost never resolved through gift cards, wire transfers, or cryptocurrency.

- Emotional manipulation: The caller uses fear, guilt, or panic to push you into making quick decisions.

- Story details that don’t quite fit: The location, circumstances, or story elements might seem slightly off.

- No callback option: The caller discourages you from hanging up and calling back on a known number.

The most important thing anyone can do in this situation is pause. Take a breath. Hang up and call your family member directly using a number you already know. Even a two-minute delay can expose the entire scam.
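For readers who like to see a checklist written out, the warning signs above can be sketched as a small Python helper. This is only an illustration: the flag names and the `assess_call` function are hypothetical, and the rule it applies (any single warning sign means hang up and call back) is a cautious reading of the advice above, not an official scoring method.

```python
# Hypothetical flag names mirroring the six warning signs listed above.
WARNING_SIGNS = {
    "urgency": "Caller insists you act immediately",
    "secrecy": "Caller tells you not to tell family or friends",
    "unusual_payment": "Payment requested by gift card, wire transfer, or cryptocurrency",
    "emotional_manipulation": "Caller uses fear, guilt, or panic",
    "story_mismatch": "Details of the story seem slightly off",
    "no_callback": "Caller discourages hanging up and calling back",
}


def assess_call(observed):
    """Return (matched warning signs, advice) for a set of observed flag names."""
    unknown = set(observed) - set(WARNING_SIGNS)
    if unknown:
        raise ValueError(f"Unknown warning sign(s): {sorted(unknown)}")

    # Keep the matches in the same order as the checklist.
    matched = [text for name, text in WARNING_SIGNS.items() if name in observed]

    if matched:
        advice = "Hang up and call your family member on a number you already know."
    else:
        advice = "No listed warning signs noted, but calling back to verify is still reasonable."
    return matched, advice


if __name__ == "__main__":
    signs, advice = assess_call({"secrecy", "unusual_payment"})
    for sign in signs:
        print("Warning sign:", sign)
    print(advice)
```

The sketch deliberately avoids any point threshold. In the Ohio case, the secrecy instruction or the gift card request alone would have been reason enough to pause.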

How to Protect Yourself and Your Family

There are practical steps that everyone — especially older adults and their families — can take to reduce the risk of falling victim to voice fraud.

Create a Family Code Word

Agree on a secret word or phrase that only your immediate family knows. If someone calls claiming to be a family member in distress, ask them to say the code word. A cloned voice can imitate how your relative sounds, but the scammer behind it won’t know the word.

Review Your Social Media Privacy Settings

Because voice samples are often pulled from publicly available videos, limiting who can see your family’s social media content reduces the risk. Set accounts to private and think carefully before posting videos of yourself or your children.

Talk to Elderly Family Members About These Scams

Many older adults don’t know that voice cloning technology exists. Simply telling them about it — and explaining that criminals can copy voices using a short video clip — can be enough to make them pause and question a suspicious call.

Never Pay Through Gift Cards or Wire Transfers in an Emergency

Real legal emergencies, bail situations, or hospital payments are handled through official channels. If anyone asks you to pay using gift cards, or to wire money to someone you cannot verify, treat that alone as a strong sign of a scam.

Report Suspicious Calls

If you receive a call you suspect is a scam, report it to the FTC at reportfraud.ftc.gov or to the FBI’s Internet Crime Complaint Center at ic3.gov. Reporting these calls helps investigators track patterns and protect others.

What Authorities and Technology Companies Are Doing

Law enforcement and technology companies are aware of the problem and working to address it, though the challenge is significant.

The Federal Trade Commission has issued multiple consumer alerts about AI voice scams. The FBI has published warnings about grandparent scams powered by voice cloning technology. Some members of Congress have called for stronger regulations on AI voice tools, including requirements that companies add watermarks or digital identifiers to cloned audio.

Several technology companies are also developing tools that can detect AI-generated audio. These tools analyze tiny patterns in the sound wave that are too subtle for human ears to catch but can indicate whether a voice is likely real or artificially generated. No detector is perfect, so their results are best treated as one signal among several.

Phone carriers are being pressured to improve call authentication systems that can flag suspicious or spoofed numbers before the phone even rings. Some of this infrastructure is already in place through a framework called STIR/SHAKEN, though enforcement remains inconsistent.

Still, experts are clear: technology solutions will take time. Right now, the most effective protection is awareness.

The Emotional Cost That Never Shows Up in the Statistics

When the media covers elder fraud, the focus is usually on the financial loss. And the numbers are devastating — thousands, sometimes hundreds of thousands of dollars stolen from people who worked their entire lives to save it.

But the emotional damage is often just as serious and far harder to measure.

Victims frequently report deep feelings of shame and embarrassment after being deceived. Many blame themselves, wondering how they could have been fooled. Some become afraid to answer the phone at all. Trust — in technology, in strangers, sometimes even in family members — can be permanently damaged.

The retired teacher in Ohio told advocates that what hurt her most wasn’t losing the money. It was the feeling that she had been made to believe something awful had happened to someone she loved deeply. That kind of emotional manipulation, she said, felt like a violation she couldn’t easily put into words.

“They used his voice against me,” she said. “That feels like the worst part of all of it.”

Final Thoughts: Staying One Step Ahead

AI voice spoofing fraud is a serious, growing, and deeply troubling form of cybercrime. It turns the most basic human instinct — trusting the people we love — into a vulnerability that criminals can exploit for profit.

But awareness is a powerful defense. Understanding how these scams work, knowing the warning signs, creating family safety plans, and talking openly about AI abuse can make a real difference in protecting vulnerable people from being targeted.

The technology behind these attacks will keep getting more sophisticated. But so can we. Take a moment today to talk to the older adults in your life. Share this information. Set up a family code word. Check those social media privacy settings.

One conversation now could save someone’s life savings — and protect them from the kind of heartbreak that no amount of money can ever fully repair.