This Is What Happens When AI Wrongly Accuses You of a Crime

When Technology Gets It Wrong

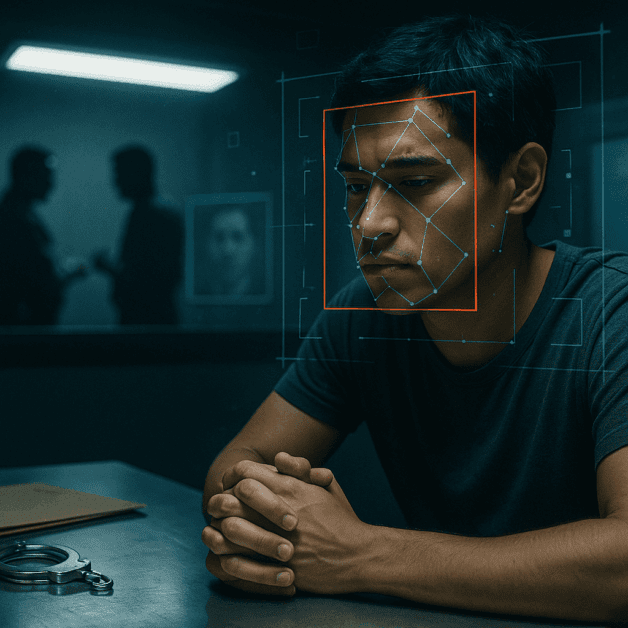

Artificial intelligence is being used more and more in law enforcement and criminal justice. Police departments, courts, and government agencies are turning to AI tools to help identify suspects, predict criminal behavior, and even assist in sentencing decisions. But what happens when these systems make a mistake? What happens when AI wrongly accuses an innocent person of a crime?

The answer is deeply troubling. A false accusation powered by AI can turn a person’s life upside down in ways that are difficult to fully understand until it happens to you. And unlike a mistake made by a single human officer, an AI error can move through the justice system quickly, carrying with it an air of scientific certainty that makes it even harder to challenge.

Real People, Real Consequences

This is not a theoretical problem. There have already been documented cases where AI systems have wrongly identified innocent people as criminal suspects. In the United States, facial recognition technology has been linked to the wrongful arrests of Black men, including Robert Williams in Michigan and Nijeer Parks in New Jersey. Both men were arrested based on faulty facial recognition matches. Both were innocent.

These men lost time. They lost money paying for legal defense. They suffered damage to their reputations. They experienced the fear, confusion, and humiliation of being treated as criminals for something they did not do. And they had to fight against a system that had, at least initially, placed its trust in a machine over a human being.

How AI Errors Happen in Criminal Justice

To understand why AI gets it wrong, it helps to understand how these systems work. Most AI tools used in criminal justice are trained on large amounts of data. They look for patterns in that data and use those patterns to make predictions or identifications. The problem is that the data itself is often flawed.

Here are some of the most common reasons AI makes errors in this context:

- Biased training data: If the data used to train an AI system reflects existing racial or social biases, the system will reproduce those biases in its outputs.

- Poor image quality: Facial recognition systems often struggle with low-resolution images, poor lighting, or photos taken at unusual angles.

- Overfitting: Some AI systems are trained too narrowly, meaning they perform well on the data they were trained on but fail in real-world situations.

- Lack of context: AI systems often cannot account for the full context of a situation the way a human investigator can.

- Outdated data: Systems trained on old data may not reflect current realities or updated information.

These are not small or rare problems. They are built into how many of these systems currently work.
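To make the overfitting point concrete, here is a minimal sketch in Python. Everything in it is invented for illustration: the numbers, the rule that labels are 1 when x is at least 5, and the two deliberately wrong training labels that stand in for flawed data. It is a toy, not a model of any real law enforcement system. It compares a model that memorizes its training examples with a simpler one that does not.

```python
# Toy illustration of overfitting. All numbers are made up for this sketch.
# Assumed underlying rule: the label is 1 when x >= 5, otherwise 0.
# Two training labels (x=3 and x=7) are deliberately wrong, standing in for flawed data.
TRAIN = [(1, 0), (2, 0), (3, 1), (4, 0), (6, 1), (7, 0), (8, 1), (9, 1)]

# New, correctly labeled cases that neither model has seen before.
NEW = [(1.4, 0), (2.6, 0), (3.4, 0), (4.6, 0), (5.4, 1), (6.6, 1), (7.4, 1), (8.6, 1)]


def memorizing_model(x):
    """Copy the label of the closest training example (1-nearest neighbor)."""
    nearest = min(TRAIN, key=lambda pair: abs(pair[0] - x))
    return nearest[1]


def simple_model(x):
    """Use a single threshold and ignore quirks of individual training examples."""
    return 1 if x >= 5 else 0


def accuracy(model, data):
    """Fraction of examples in `data` that the model labels correctly."""
    correct = sum(1 for x, label in data if model(x) == label)
    return correct / len(data)


if __name__ == "__main__":
    for name, model in [("memorizing", memorizing_model), ("simple", simple_model)]:
        print(name, "train:", accuracy(model, TRAIN), "new:", accuracy(model, NEW))
```

In this toy setup the memorizing model scores 1.0 on its own training data but only 0.5 on the new cases, because it has faithfully learned the two bad labels. The simple model scores 0.75 on the training data and 1.0 on the new cases. A system can look flawless on the data it was built from and still be wrong about the people it meets afterward.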

The False Sense of Authority

One of the most dangerous aspects of AI errors in criminal justice is how authoritative the results can appear. When a computer system flags someone as a suspect, it can feel like hard evidence. Officers, prosecutors, and even judges may be more likely to trust a data-driven result than a human opinion, even when that result is wrong.

This creates a serious problem for due process. Due process is the legal requirement that the government follow fair procedures and respect a person’s legal rights before depriving them of life, liberty, or property. When an AI system produces a result that is treated as near-certain truth, it can short-circuit the careful, human-centered process that due process requires.

Defendants who are falsely accused based on AI evidence often face an uphill battle. They must try to challenge something that feels technical and complex, often without easy access to the inner workings of the system that accused them. In many cases, the companies that build these AI tools treat their systems as proprietary and resist disclosing exactly how the technology works. This makes it very difficult for a defense attorney to properly challenge the evidence.

The Arrest Is Just the Beginning

When someone is wrongly accused by an AI system, the problems do not stop at the arrest. Consider what a person typically goes through after being taken into custody:

- Detention: A person may be held in jail while they wait for bail or a hearing. This can last hours, days, or even longer.

- Job loss: Missing work during detention or while dealing with legal proceedings can cost someone their job.

- Financial strain: Hiring a lawyer, posting bail, and taking time off work creates serious financial pressure.

- Reputation damage: Arrest records can be public, and news of an arrest can spread quickly, damaging a person’s reputation even before any charges are proven.

- Psychological harm: Being wrongly accused of a crime causes real emotional and psychological damage, including anxiety, depression, and trauma.

- Family disruption: A parent being arrested can affect their children and their family’s stability in lasting ways.

Even after charges are dropped or a person is acquitted, the damage is often already done. It can take years to rebuild what was lost.

Who Is Responsible When AI Gets It Wrong?

This is one of the most complicated questions in this entire issue. When an AI system causes a false accusation, who is to blame? Is it the company that built the technology? The police department that used it? The officer who acted on its results? The prosecutor who brought charges?

Right now, there are very few clear legal answers to these questions. Laws around AI accountability are still developing, and in many places, they simply do not exist yet. Companies that sell AI tools to law enforcement often include legal protections in their contracts that limit their liability when things go wrong. And government agencies and their employees can sometimes shield themselves behind existing legal doctrines, such as sovereign immunity and qualified immunity.

This accountability gap is a serious problem. If no one is clearly responsible when AI causes harm, there is little incentive to fix the systems that cause that harm. The people who suffer the consequences are often left without any real avenue for justice.

What Needs to Change

Fixing this problem will not be easy, but there are steps that experts, advocates, and policymakers have pointed to as important starting points:

- Transparency requirements: Law enforcement agencies should be required to disclose when and how they use AI tools, and defendants should have the right to understand the AI evidence used against them.

- Independent audits: AI systems used in criminal justice should be regularly tested and audited by independent third parties to check for accuracy and bias.

- Human oversight: AI results should never be the sole basis for an arrest or conviction. A qualified human being must review and take responsibility for every decision.

- Legal accountability: Clear laws should establish who is responsible when AI causes harm and what remedies are available to victims.

- Stronger due process protections: Courts should recognize the unique challenges that AI evidence creates and put stronger safeguards in place to protect defendants’ rights.

- Moratoriums on high-risk uses: Some uses of AI, especially facial recognition in law enforcement, may need to be paused or banned entirely until the technology is proven to be accurate and fair.
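As a rough illustration of what the independent audits in the list above might measure, the Python sketch below computes a false match rate for each demographic group. The record format, the group names, and every row of data are hypothetical and invented for this example. A real audit would need a much larger, independently collected test set and more than one metric.

```python
from collections import defaultdict

# Hypothetical audit records: (group, system_said_match, truly_same_person).
# These rows are invented for illustration and are not real measurements.
RECORDS = [
    ("group_a", True, True),
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", True, False),
    ("group_a", False, False),
    ("group_b", True, False),
    ("group_b", True, False),
    ("group_b", False, False),
    ("group_b", True, True),
    ("group_b", False, False),
]


def false_match_rates(records):
    """Per group: share of true non-matches that the system flagged as matches."""
    flagged = defaultdict(int)      # wrongly flagged non-matches per group
    non_matches = defaultdict(int)  # all true non-matches per group
    for group, predicted, actual in records:
        if not actual:
            non_matches[group] += 1
            if predicted:
                flagged[group] += 1
    return {group: flagged[group] / non_matches[group] for group in non_matches}


if __name__ == "__main__":
    for group, rate in sorted(false_match_rates(RECORDS).items()):
        print(f"{group}: false match rate = {rate:.2f}")
```

With these made-up rows, the system wrongly flags 1 of 4 non-matching people in group_a and 2 of 4 in group_b. A gap like that between groups is the kind of finding an audit should surface before a tool is used on real suspects.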

The Human Cost Cannot Be Ignored

It is easy to talk about AI errors in abstract terms. But behind every statistic and every case study is a real person whose life was disrupted by a machine that got it wrong. These are people who went to work, came home to their families, and tried to live their lives without trouble. They were not caught up in the justice system because of anything they did. They were caught up in it because an algorithm made a mistake.

That is not a small thing. That is a failure of the systems we trust to protect us. And it is a reminder that technology, no matter how advanced it becomes, does not replace the need for fairness, accountability, and human judgment.

Staying Informed and Pushing for Change

For most people, the issue of AI in criminal justice can feel far away until it suddenly is not. Learning about how these systems work, following policy debates, and supporting organizations that advocate for civil liberties and fair treatment are all meaningful ways to engage with this issue.

The criminal justice system is supposed to operate on the principle that a person is innocent until proven guilty. That principle does not change just because a computer is involved. If anything, it becomes more important to protect, because the stakes of getting it wrong are just as high, and the risks of being fooled by false certainty are even greater.

AI has real potential to help make systems more efficient and even more fair in some contexts. But that potential means nothing if the technology causes innocent people to be arrested, charged, and harmed. Getting this right is not optional. It is a matter of basic justice.