The New Deepfake Impersonation Crime, and What It Costs to Commit It

When a Fake Face Becomes a Real Crime

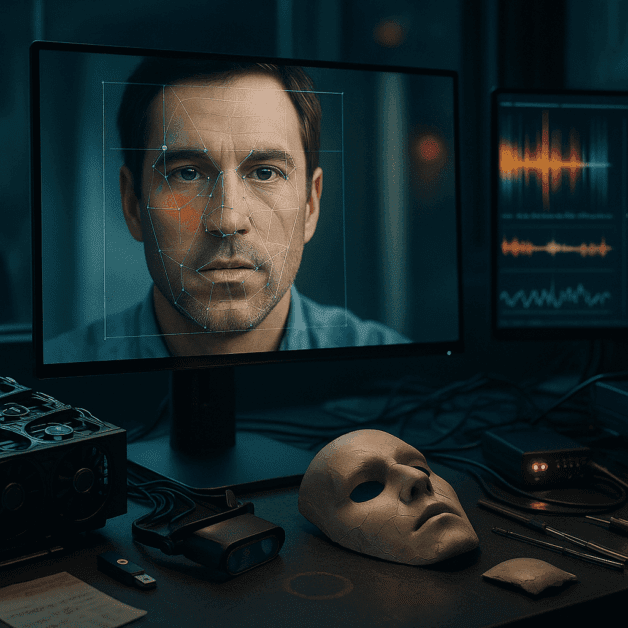

Not long ago, deepfakes were mostly seen as a tech curiosity — videos that made it look like celebrities were saying things they never said. But today, the technology has become sharp enough and cheap enough that ordinary people are using it to impersonate others for financial gain, harassment, and fraud. Governments are now catching up, and the legal consequences are growing serious.

If you have ever wondered whether creating or sharing a deepfake of someone could land you in real legal trouble, the short answer is: yes, it absolutely can. And depending on where you live and what the deepfake was used for, the penalties can be severe.

What Exactly Is Deepfake Impersonation?

Deepfake impersonation happens when someone uses artificial intelligence to create a realistic video, audio recording, or image that makes it appear as though a real person said or did something they never actually did. The goal is usually to deceive — whether for money, reputation damage, political manipulation, or personal revenge.

Common examples include:

- Fake audio clips of executives giving fraudulent financial instructions

- Video calls where a scammer appears as a trusted colleague or family member

- Non-consensual intimate images using someone’s face

- Fake political speeches designed to mislead voters

- Impersonation of public figures to promote scams or spread misinformation

The technology to create these fakes has become widely available. Free and low-cost tools now exist that can produce convincing results with minimal technical knowledge. That accessibility is a big part of why lawmakers have started treating this as an urgent problem.

How the Law Is Responding

The legal framework around deepfakes is still developing, but it is moving fast. Several countries and U.S. states have passed or are in the process of passing specific deepfake laws that create new criminal categories for this kind of behavior.

In the United States, federal legislation such as the DEFIANCE Act and the NO FAKES Act has been introduced or passed to address specific aspects of deepfake misuse, particularly non-consensual intimate images and voice or likeness cloning. At the state level, more than a dozen states have enacted their own laws targeting deepfake fraud, election interference, and sexual exploitation.

Other countries have also acted. The United Kingdom's Online Safety Act made it a criminal offense to share deepfake sexual images without consent. South Korea passed dedicated legislation making deepfake pornography a criminal offense. Australia has incorporated deepfakes into its existing harassment and image-based abuse laws.

Criminal Liability: When Does It Apply?

Not every deepfake is automatically a crime. Context matters a great deal. A deepfake made for obvious satire or artistic commentary is treated very differently from one designed to deceive or harm. Criminal liability typically kicks in when the deepfake is used with intent to:

- Defraud someone financially — such as impersonating a CEO to trick a company into transferring money

- Harass or harm a specific individual — particularly in cases of intimate image abuse

- Interfere with an election — by creating false statements attributed to candidates or officials

- Defame a person — spreading damaging falsehoods presented as real

- Impersonate someone to gain access or benefits — such as bypassing identity verification systems

Courts and prosecutors look at intent, impact, and audience when determining whether a deepfake crosses the line from creative expression into criminal conduct.

The Real Criminal Penalties

This is where things get very concrete. The penalties attached to deepfake-related crimes vary depending on the jurisdiction and the nature of the offense, but they are not light.

In the United States

Deepfake fraud connected to financial crimes can be prosecuted under existing wire fraud statutes, which carry penalties of up to 20 years in federal prison. When deepfakes are used in cases involving organized crime or large-scale financial damage, additional charges can push sentences even higher.

For non-consensual intimate deepfakes, state penalties typically range from misdemeanors, punishable by fines and up to one year in jail, to felonies carrying prison sentences of two to five years. Where an offense falls in that range depends on its severity and on how widely the images were distributed.

Election-related deepfakes are increasingly being treated as serious offenses. Some states impose criminal penalties of up to five years in prison for distributing fabricated media designed to influence an election within a specified period before voting day.

In the United Kingdom

Under the Online Safety Act, sharing non-consensual deepfake intimate images is a criminal offense. Penalties can include prison sentences, and the law was specifically updated to close loopholes that previously made prosecution difficult.

In South Korea

South Korea has some of the toughest deepfake laws currently in effect. Creating and distributing deepfake sexual content can result in up to five years in prison or fines of up to 50 million Korean won. Possession of such content, even without distribution, carries its own penalties.

Financial Costs Beyond Criminal Penalties

Criminal penalties are only part of the picture. Victims of deepfake impersonation can also pursue civil lawsuits, which means the person responsible may face significant financial damages on top of any criminal sentence.

Civil claims often include:

- Compensatory damages — covering the actual harm caused, such as lost income, therapy costs, or reputation repair

- Punitive damages — additional financial penalties meant to punish particularly harmful or malicious behavior

- Injunctive relief — court orders requiring the immediate removal of deepfake content

In high-profile corporate fraud cases where deepfakes were used to authorize large financial transfers, the sums at stake have reached into the millions. In a widely reported case in Hong Kong, a company lost approximately US$25 million after an employee was deceived by a deepfake video call impersonating company executives.

Who Can Be Held Responsible?

One important question in deepfake law is who exactly faces liability. It is not always just the person who created the fake. Responsibility can extend to:

- The creator — the person who used AI tools to generate the deepfake

- The distributor — anyone who shared, published, or spread the content knowing what it was

- The platform — in some jurisdictions, online platforms that fail to remove reported deepfake content in a timely manner face their own regulatory consequences

- The commissioner — someone who paid or directed another person to create the deepfake for a harmful purpose

This broad reach means that even people who did not personally make the deepfake can face criminal charges if they were knowingly involved in its creation or distribution.

Defenses That Have Been Raised — and Their Limits

People accused of deepfake-related crimes have tried various defenses. Some of the most common include:

- Parody and satire — arguing the content was clearly not meant to be taken as real

- Free speech — particularly in U.S. cases, where First Amendment arguments arise

- Lack of intent — claiming there was no purpose to deceive or harm

- Consent — arguing the person depicted agreed to the use of their likeness

These defenses have met with limited success when the content was designed to look realistic and was distributed in contexts where viewers would likely believe it was genuine. Courts have been increasingly skeptical of claims that realistic impersonation content was just a joke, especially when harm resulted.

What This Means for Everyday People

Most people interacting with deepfake tools are not thinking about criminal liability. They may be using an app that swaps faces in a video for fun or experimenting with AI voice cloning tools. But the line between casual experimentation and criminal conduct can be crossed more easily than many realize.

Here are some practical things to keep in mind:

- Creating a realistic fake of a real person without their consent — even without distributing it — can carry legal risk in some jurisdictions

- Using someone’s voice or likeness in a way that could deceive viewers or listeners into thinking the content is real is the conduct most deepfake laws target

- Sharing content you know to be fake, particularly if it damages someone’s reputation or safety, can make you legally responsible even if you did not create it

- Deepfake tools themselves are not illegal — but how they are used determines whether a crime has been committed

The Road Ahead for Deepfake Law

Deepfake law is still being written. Courts are working through edge cases, legislators are updating statutes as the technology evolves, and international coordination is gradually improving. What is clear is that the era of treating deepfakes as a harmless novelty is over.

Governments around the world are treating deepfake impersonation as a genuine threat — to individuals, to financial systems, and to democratic processes. The criminal penalties now attached to this behavior reflect that seriousness. As detection tools improve and public awareness grows, prosecution rates are likely to increase as well.

For anyone who uses or encounters deepfake technology, understanding the legal boundaries is no longer optional. The consequences of crossing them are real, significant, and growing.