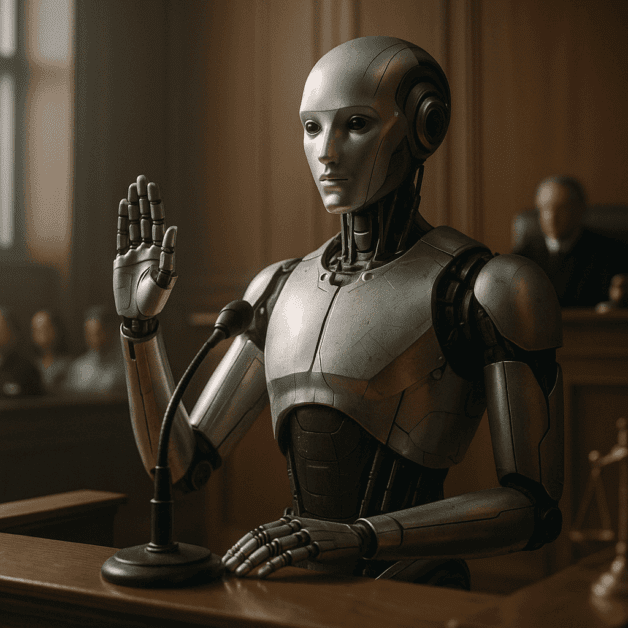

Can AI Testify in Court? A Judge Just Said Yes.

A Legal First That Has Everyone Talking

Courts have seen all kinds of evidence over the years: handwritten notes, security footage, DNA samples, and even social media posts. But something new just happened that is turning heads across the legal world. A judge has ruled that AI-generated content can be used as evidence in a courtroom. Legal experts, tech professionals, and everyday people are paying close attention, and for good reason.

The question of whether artificial intelligence can “speak” in a court of law is no longer just a thought experiment. It is now a real legal issue with real consequences. So what does this actually mean? And should we be worried — or impressed?

What Exactly Happened in the Courtroom?

In a recent case, a judge allowed AI-generated analysis to be presented as part of the evidence in a legal proceeding. The AI system in question had processed large amounts of data and produced findings that were considered relevant to the outcome of the trial. Rather than throwing the material out, the judge decided it met the standard for legal admissibility.

This does not mean the AI sat in a witness chair and answered questions. What it means is that the output produced by an AI system — its conclusions, patterns, and data interpretations — was accepted as credible and useful information for the court to consider. That alone is a significant step forward in how courts treat technology.

Why Legal Admissibility Matters So Much

For any piece of evidence to be used in a trial, it has to pass a set of legal tests. Courts ask whether the evidence is reliable, whether it is relevant to the case, and whether it was gathered in a fair and lawful way. These standards exist to protect everyone involved in a legal dispute.

When it comes to AI-generated evidence, the same rules apply, but with new questions added on top. Legal experts now have to consider things like:

- How was the AI trained, and on what kind of data?

- Can the AI’s reasoning be explained clearly to a jury?

- Is there a risk that the AI produced biased or incorrect results?

- Who is responsible if the AI gets something wrong?

These are not easy questions. But now that a judge has said yes to AI-generated evidence, the legal system has to start working out the answers.

How AI Evidence Is Different From Traditional Evidence

Traditional witnesses are people who saw or heard something and can speak to their experience directly. They can be cross-examined, challenged, and held accountable for what they say. AI systems are different in very clear ways.

An AI does not have personal experience. It processes data according to its programming and produces an output. It cannot be sworn in, it does not have emotions, and it cannot be pressured or intimidated. On the one hand, that could make AI seem more objective. On the other hand, it raises serious questions about accountability and transparency at trial.

If an AI makes a mistake that affects the outcome of a trial, who answers for that? The developer? The lawyer who introduced the evidence? The company that sold the software? Right now, there are no clean answers. But courts are beginning to build a framework to address exactly these kinds of situations.

What the Legal Community Is Saying

Reactions from lawyers and legal scholars have been mixed. Some see this as a natural and necessary evolution of how courts handle modern evidence. They argue that, for certain tasks, AI can process information far more quickly and accurately than a human expert, and that keeping it out of the courtroom would deprive courts of useful evidence.

Others are more cautious. They worry that juries might place too much trust in AI-generated conclusions simply because they come from a computer. There is a long history of people assuming that technology is always right, a tendency sometimes called automation bias. In a courtroom, that kind of thinking can be dangerous.

Some attorneys are also raising concerns about fairness. Not every defendant has access to the best AI tools or the legal teams who know how to challenge AI-generated evidence effectively. If one side in a trial can afford sophisticated AI analysis and the other cannot, that creates an imbalance that strikes at the heart of what a fair trial is supposed to be.

Real-World Cases Where AI Evidence Could Play a Role

To understand why this matters, it helps to think about the kinds of cases where AI evidence might show up. Here are a few examples:

- Financial fraud cases: AI systems can analyze millions of transactions and identify suspicious patterns that would take human analysts months to find.

- Criminal investigations: AI tools can process surveillance footage, phone records, and online activity to establish timelines or connections between individuals.

- Medical malpractice: AI can review patient records and compare treatment decisions against established medical standards.

- Employment discrimination: AI can analyze hiring data across large organizations to identify whether certain groups were systematically treated differently.

In each of these cases, AI evidence could genuinely help reveal the truth. But in each case, there is also room for error, misuse, or misinterpretation. That is why the legal standards surrounding AI testimony need to be carefully developed over time.
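
To make the employment discrimination example a little more concrete, here is a minimal sketch of the simplest kind of check such an analysis might start with: comparing how often applicants from each group were hired. Everything in it is an assumption for illustration. The group labels, the numbers, and the function names `selection_rates` and `rate_ratios` are made up, and no real AI tool or court case is being described.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: hires / applicants} from (group, hired) pairs."""
    applicants = Counter()
    hires = Counter()
    for group, hired in records:
        applicants[group] += 1
        if hired:
            hires[group] += 1
    return {group: hires[group] / applicants[group] for group in applicants}


def rate_ratios(rates):
    """Divide each group's hiring rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody was hired at all, so no difference between groups can be measured.
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


# Hypothetical data: 100 applicants in each of two made-up groups.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 20 + [("B", False)] * 80
)

rates = selection_rates(records)
ratios = rate_ratios(rates)

for group in sorted(rates):
    print(f"group {group}: hired {rates[group]:.0%}, ratio to top group {ratios[group]:.2f}")
```

In this made-up data, group B is hired at half the rate of group A. A gap like that is a reason to look closer, not a conclusion. It says nothing about qualifications, job types, or sample size, which is exactly why a human expert still has to explain what a number like this does and does not show.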

The Role of Human Experts Alongside AI

One thing most legal professionals agree on is that AI should not operate in isolation inside a courtroom. Human experts still play a critical role. An AI might identify a pattern in data, but a qualified human expert is needed to explain what that pattern means, why it matters, and what its limitations are.

This combination — AI’s processing power alongside human judgment and accountability — seems to be the direction courts are heading. The AI handles the heavy lifting of data analysis, while a human expert takes responsibility for presenting and defending the conclusions in front of a judge and jury.

This approach also helps address the transparency problem. When a human expert testifies, they can be questioned about their methods and conclusions. When AI is part of that process, the expert must also be able to explain how the AI works, what assumptions it made, and where its conclusions could be wrong.

What This Means for the Future of Trial Procedure

This ruling is likely just the beginning. As AI becomes more capable and more widely used, courts around the world will face increasing pressure to decide how to handle AI-generated evidence. Some countries may embrace it quickly. Others may hold back until clearer guidelines are in place.

In the United States, trial procedure is shaped by a mix of federal and state rules, court decisions, and ongoing legal debate. The admissibility of AI testimony will likely be tested many more times before a consistent standard emerges. Each new case will add to the body of legal knowledge about how AI fits into the justice system.

Legal scholars are already calling for updated evidence rules that specifically address AI. They want courts to require disclosure about how AI systems work, what data they were trained on, and what potential biases might exist. Transparency, they argue, is the key to making AI evidence fair and trustworthy.

Should the Public Be Concerned?

It is completely reasonable to feel uncertain about AI playing a role in legal decisions. The stakes in a courtroom are high — people’s freedom, money, and reputations are on the line. The idea of a computer system influencing those outcomes is understandably unsettling for many people.

At the same time, it is worth remembering that the legal system has always adapted to new forms of evidence. There was a time when fingerprint analysis was controversial in court. DNA evidence was once met with skepticism. Each time, the legal system developed ways to evaluate new evidence fairly and responsibly.

AI is simply the next frontier. The key is making sure that the rules around AI testimony are built with care, with input from legal experts, technologists, and the public, and with a strong commitment to fairness and accuracy.

The Bottom Line

A judge saying yes to AI testimony in court is a landmark moment. It signals that the legal system is no longer ignoring the reality of artificial intelligence — it is beginning to engage with it directly. That is an important step, even if it raises more questions than it answers right now.

The conversations happening in courtrooms, law schools, and legislative chambers today will shape how justice works in the age of AI. What matters most is that those conversations stay focused on one thing: making sure that every person who enters a courtroom gets a fair, honest, and accurate hearing — no matter what tools are used to get there.