Synthetic Influencers Are Now Legally Different From Real Ones — Here’s How

The Rise of Synthetic Influencers

Not long ago, every face you saw promoting a product online belonged to a real person. That is no longer the case. Today, brands are using AI-generated characters, virtual avatars, and computer-made personalities to sell everything from skincare to sneakers. These are called synthetic influencers, and they are becoming a serious part of digital marketing.

But as their popularity grows, so does the legal conversation around them. Regulators, lawmakers, and consumer protection agencies are starting to draw clear lines between synthetic media personalities and real human influencers. Those lines carry real consequences for brands, platforms, and the creators who build these digital figures.

What Counts as a Synthetic Influencer?

Before diving into the legal differences, it helps to understand what we mean by a synthetic influencer. In simple terms, a synthetic influencer is a digital personality that does not exist as a real human being. These can include:

- Fully AI-generated characters with no human behind them

- Computer-animated avatars operated by a real person using a virtual persona

- Deepfake versions of real celebrities used without their consent

- AI-voiced characters that simulate human conversation and endorsement

One of the best-known examples is Lil Miquela, a virtual influencer who has millions of followers but is entirely computer-generated. Others are less obvious, blurring the line between what is real and what is manufactured. That blurriness is exactly what regulators are trying to address.

How FTC Rules Are Evolving to Cover Synthetic Media

The Federal Trade Commission, commonly known as the FTC, has long required influencers to disclose when they are being paid to promote a product. The core idea is simple: consumers deserve to know when they are seeing advertising rather than a genuine personal recommendation.

For years, those rules focused on human influencers. But the FTC has been updating its guidelines to address the growing use of synthetic media in marketing. Here is what has changed:

- Disclosure of AI origin: Brands are expected to clearly indicate when an influencer is AI-generated or not a real person. Simply calling a character a “virtual influencer” in a bio may not be enough.

- Stronger language requirements: Vague labels like “digital” or “avatar” are being pushed aside in favor of plain-language disclosures such as “This is an AI-generated character” or “Not a real person.”

- Platform-level accountability: Social media platforms that host synthetic influencer content may also face pressure to enforce disclosure standards alongside individual brands.

The FTC has made it clear that the spirit of its endorsement guidelines applies whether the face promoting a product is human or artificial. Deception is deception, regardless of the medium.

Disclosure Requirements: What Brands Must Do Differently

One of the biggest legal distinctions between real and synthetic influencers comes down to disclosure requirements. Real human influencers are expected to use labels like “#ad” or “#sponsored” when they are paid to promote something. Synthetic influencers face an additional layer of required transparency.

Brands working with synthetic media personalities now need to consider the following:

- Dual disclosure: The content must disclose both the commercial relationship and the artificial nature of the influencer. One without the other is not enough.

- Placement of disclosures: Disclosures must appear prominently and early in the content, not buried in fine print or hidden at the bottom of a caption.

- Consistency across platforms: If a synthetic influencer is active on multiple platforms, the same disclosure standards must apply on every channel.

- Ongoing updates: As AI tools evolve and new types of synthetic media emerge, legal teams must stay current with updated FTC guidance to avoid violations.

Failing to meet these requirements can result in FTC investigations, fines, and serious reputational damage for a brand. The risk is real, and regulators are paying attention.
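For teams that want an automated first pass before legal review, the dual-disclosure and placement points above can be sketched as a simple caption check. This is a minimal illustration, not a compliance tool: the pattern lists are hypothetical and built only from the example labels quoted in this article, the names `check_disclosures` and `DisclosureReport` are invented for the sketch, and the 125-character cutoff for "early" is an arbitrary assumption that does not come from any FTC guidance.

```python
import re
from dataclasses import dataclass
from typing import Optional, Sequence

# Hypothetical pattern lists built from the example labels quoted in this article.
COMMERCIAL_PATTERNS = (r"#ad\b", r"#sponsored\b")
AI_ORIGIN_PATTERNS = (r"\bai-generated\b", r"\bnot a real person\b")

# Assumption: "early" means within the first 125 characters of the caption.
# This number is illustrative only; it does not come from any FTC guidance.
EARLY_CUTOFF = 125


@dataclass(frozen=True)
class DisclosureReport:
    has_commercial: bool
    has_ai_origin: bool
    commercial_is_early: bool
    ai_origin_is_early: bool

    @property
    def passes(self) -> bool:
        # Dual disclosure: both labels must be present and both must appear early.
        return (self.has_commercial and self.has_ai_origin
                and self.commercial_is_early and self.ai_origin_is_early)


def first_match(patterns: Sequence[str], text: str) -> Optional[int]:
    """Return the index of the earliest match of any pattern, or None."""
    positions = []
    for pattern in patterns:
        match = re.search(pattern, text, flags=re.IGNORECASE)
        if match:
            positions.append(match.start())
    return min(positions) if positions else None


def check_disclosures(caption: str, cutoff: int = EARLY_CUTOFF) -> DisclosureReport:
    """Report which disclosures a caption contains and whether they appear early."""
    commercial_at = first_match(COMMERCIAL_PATTERNS, caption)
    ai_origin_at = first_match(AI_ORIGIN_PATTERNS, caption)
    return DisclosureReport(
        has_commercial=commercial_at is not None,
        has_ai_origin=ai_origin_at is not None,
        commercial_is_early=commercial_at is not None and commercial_at < cutoff,
        ai_origin_is_early=ai_origin_at is not None and ai_origin_at < cutoff,
    )


if __name__ == "__main__":
    report = check_disclosures("#ad Not a real person. These sneakers are great!")
    print(report, report.passes)
```

A keyword match like this can only flag captions for human review. Whether a disclosure is actually clear and prominent is a judgment call for legal counsel, and the check says nothing about disclosures made in video or audio.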

The Question of Liability

With real influencers, liability is relatively straightforward. If a human influencer makes a false claim about a product, both the influencer and the brand can be held responsible. The situation with synthetic influencers is more complicated.

Since a virtual character cannot be sued or held personally accountable, all legal responsibility shifts to the humans and companies behind it. This means:

- The brand that hires or creates the synthetic influencer carries primary liability for what that character says or implies

- The developers or agencies that build and manage synthetic influencers may also share responsibility if they knowingly participate in deceptive campaigns

- Platforms that allow synthetic influencer content without proper disclosures could face regulatory scrutiny as well

This shift in liability is significant. With no human influencer to share the blame, responsibility rests entirely with the businesses behind the character, which raises the stakes considerably for companies that cut corners on disclosure or use AI personas to mislead consumers.

State-Level Laws Are Also Getting Involved

Federal guidelines from the FTC are just one piece of the puzzle. Individual states are beginning to pass their own laws that affect synthetic media and digital influencers. California, for example, has passed legislation targeting the use of deepfakes in political advertising and has expanded protections for people whose likenesses are used without permission.

These state-level rules add another layer of legal complexity for brands operating across the country. What is acceptable in one state may be illegal in another, and the patchwork of regulations is only expected to grow as AI-generated content becomes more common.

Brands and legal teams need to track both federal and state-level developments to stay compliant. Ignoring state laws because federal guidance seems sufficient is a dangerous approach in today’s rapidly changing legal environment.

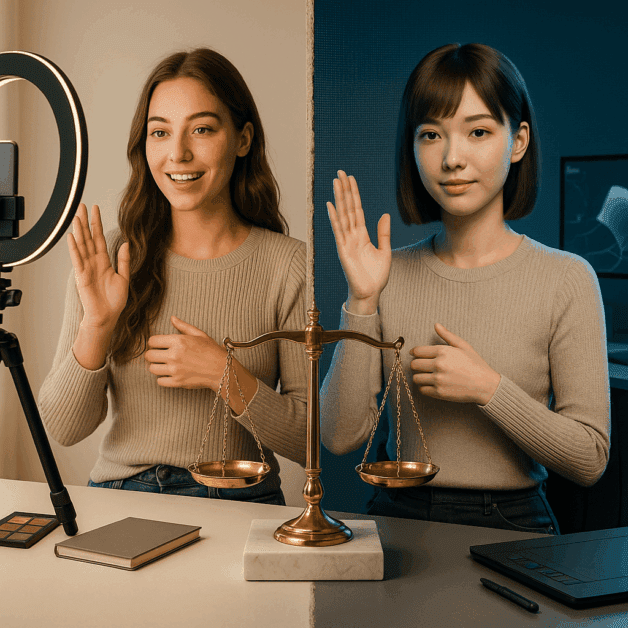

Real Influencers vs. Synthetic Influencers: A Side-by-Side Look

In practical terms, here is how the legal treatment of real and synthetic influencers differs:

- Identity disclosure: Real influencers must disclose paid relationships. Synthetic influencers must disclose both the paid relationship and the fact that they are not real people.

- Personal liability: Real influencers share legal responsibility with brands. Synthetic influencers have no legal standing, so responsibility falls on the brands and companies behind them.

- Consent and likeness rights: Real influencers control their own image and likeness. With synthetic influencers, brands must ensure the character does not mimic a real person without permission.

- Trust signals: Real influencers rely on personal credibility. Synthetic influencers rely entirely on the transparency and honesty of the brand managing them.

Why This Matters for Consumers

At the heart of all these legal changes is a simple concern: consumer protection. When people scroll through social media and see a personality recommending a product, they make judgments based on who that person is and whether they trust them. If that “person” is actually a computer-generated figure, the consumer deserves to know that before they make a purchase decision.

Many consumers may not realize how widespread synthetic influencers have become. Without clear legal requirements and strong enforcement, it would be easy for brands to use AI characters to create a false sense of authenticity and personal endorsement. The updated rules are designed to prevent that kind of manipulation.

What Brands Should Do Right Now

If your brand is using or considering using synthetic influencers, here are some practical steps to stay on the right side of the law:

- Review current FTC guidelines on endorsements and make sure your synthetic influencer campaigns meet the latest standards

- Work with legal counsel who understands both advertising law and the specific challenges of synthetic media

- Create clear, plain-language disclosures for all content featuring AI-generated personalities

- Audit existing campaigns to identify any content that may not meet current disclosure requirements

- Stay updated on state-level laws in every market where your synthetic influencer content appears

- Build a process for reviewing and updating disclosure practices as regulations continue to evolve
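The audit step in the list above can be sketched as a small script that groups posts by platform and lists the ones missing either disclosure. This is an illustration under stated assumptions: the `Post` record, its field names, and the `audit_posts` function are hypothetical, and the two boolean flags are assumed to have been filled in already by a human reviewer or a separate check.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Post:
    # Hypothetical record layout; a real export from a platform will differ.
    post_id: str
    platform: str
    discloses_sponsorship: bool
    discloses_ai_origin: bool


def audit_posts(posts: Iterable[Post]) -> Dict[str, List[str]]:
    """Map each platform to the IDs of posts missing either disclosure."""
    gaps: Dict[str, List[str]] = defaultdict(list)
    for post in posts:
        if not (post.discloses_sponsorship and post.discloses_ai_origin):
            gaps[post.platform].append(post.post_id)
    # Platforms with no gaps are omitted from the result.
    return dict(gaps)


if __name__ == "__main__":
    sample = [
        Post("ig-1", "instagram", True, True),
        Post("tt-1", "tiktok", True, False),   # sponsorship only
        Post("tt-2", "tiktok", True, True),
    ]
    for platform, post_ids in audit_posts(sample).items():
        print(f"{platform}: review {', '.join(post_ids)}")
```

Grouping by platform makes inconsistent coverage visible at a glance, for example a character that is labeled correctly on one channel but not on another.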

The Road Ahead for Synthetic Media and Influencer Law

The legal landscape around synthetic influencers is still developing. Regulators are moving faster than many expected, but technology is moving even faster. New tools for generating realistic AI personalities are released regularly, and the line between real and synthetic continues to blur.

What is clear is that the legal treatment of synthetic influencers will continue to diverge from that of real human influencers. The core principles of honesty, transparency, and consumer protection are not going away. If anything, they are becoming more important as AI-generated content becomes harder to distinguish from authentic human expression.

Brands that get ahead of these changes by building strong disclosure practices and staying current with evolving regulations will be better positioned for long-term success. Those that treat synthetic influencers as a shortcut to avoid the accountability that comes with real human partnerships may find themselves facing serious legal and reputational consequences down the road.

The message from regulators is consistent and getting louder: synthetic or not, honesty in advertising is not optional.